Seedance 2 Image to Video

ByteDance's most advanced video generation model. Cinematic output with native audio, real-world physics, and director-level camera control. Accepts text, image, audio, and video inputs.

Nano Banana 2

Nano Banana 2 is Google's new state-of-the-art fast image generation and editing model.

![Kling Video v3 Image to Video [Pro]](https://refinery.fal.media/url/https%3A%2F%2Fv3b.fal.media%2Ffiles%2Fb%2F0a8cfd08%2FJi4e0i6Afbeql3Wr5UTz6_ab60b14661424612bf19059e97e996a5.jpg/tr:w-1920,q-80/Ji4e0i6Afbeql3Wr5UTz6_ab60b14661424612bf19059e97e996a5.webp)

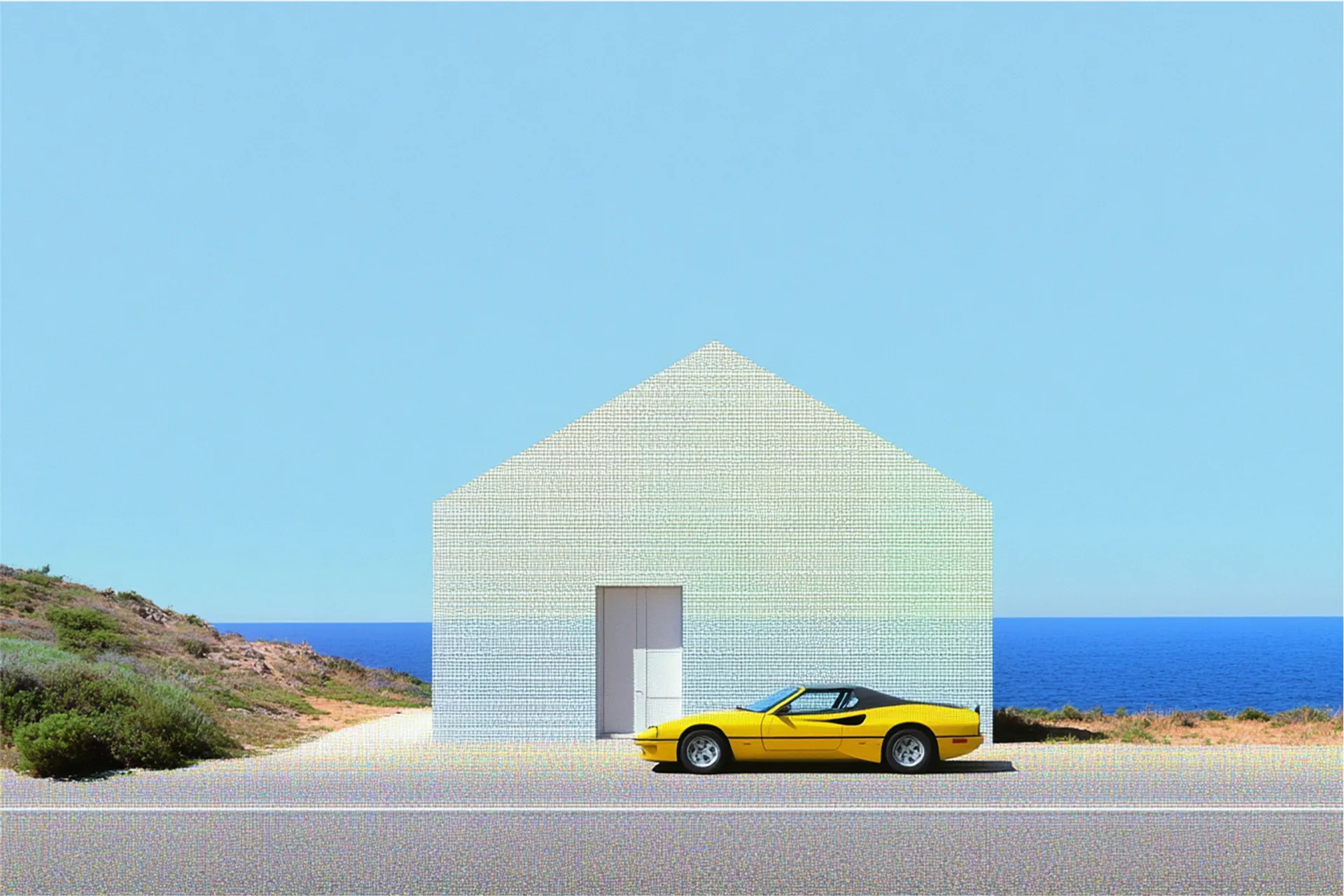

Kling Video v3 Image to Video [Pro]

Kling 3.0 Pro: Top-tier image-to-video with cinematic visuals, fluid motion, and native audio generation, with custom element support.

Happy Horse

Alibaba's #1-ranked Happy Horse 1.0 generates 1080p video with synchronized native audio and multilingual lip-sync from text prompts or images.

PixVerse V6

PixVerse V6 delivers lifelike physics and striking visuals to elevate your video creation.

Trending

Models that are popular with developers right now.

Seedance 2.0

The new state-of-the-art video model from ByteDance. Access this stunning video generation model today.

New and Noteworthy

State-of-the-art models we think you'll love!

Recently Added

Newly added models across image, video, audio, and more.

Best AI Image Generators

Unlock the future of creativity with these text-to-image AI image generator models.

Best Image Editing Models

Fan-favorite image editing models on the market.

Best of Open Source

Some of our favorite open-source media models.

Text to Speech APIs

Create lifelike speech with our AI text-to-speech APIs.

AI Image Generator APIs

Generate a variety of stunning images using our AI Image Generator APIs.

Text to Video APIs

Access the top text-to-video APIs with lightning-fast inference.

Best Image Models

Top-performing models for high-quality image generation and editing.

Background Remover APIs

Find the right API to remove the background from your images or videos.

Marquee Video Models

Flagship video generation models known for top-tier quality, motion control, and cinematic results.

Best Avatar Models

Top models for generating talking avatars, lip-sync videos, and expressive character performances.

Audio Models

Models for speech, music, sound effects, and audio generation across a wide range of use cases.

Text to Music APIs

Everything you need to start making music with AI.

Best LoRA Trainers

Training endpoints for creating and fine-tuning custom LoRA models for personalization and style adaptation.

Virtual Try On APIs

Virtually try on different outfits and character styles with our collection of APIs.

Image to 3D Model APIs

Run the best image-to-3D models on fal.

Text to 3D Model APIs

This is our collection of the best text-to-3D model APIs available on fal.

Best Utility Models

Specialized models for supporting tasks such as background removal, NSFW detection, upscaling, and much more.

Text to Image APIs

Use the latest state-of-the-art text-to-image model APIs.

![FLUX.1 [schnell] is a 12 billion parameter flow transformer that generates high-quality images from text in 1 to 4 steps, suitable for personal and commercial use.](https://refinery.fal.media/url/https%3A%2F%2Fv3b.fal.media%2Ffiles%2Fb%2F0a9af64d%2FGxvCUPd3gO-MSYcy06g0x_1641cfe028c2429b8e12e4fc320eb0a8.jpg/tr:w-1920,q-80/GxvCUPd3gO-MSYcy06g0x_1641cfe028c2429b8e12e4fc320eb0a8.webp)

![FLUX.1 [dev] is a 12 billion parameter flow transformer that generates high-quality images from text. It is suitable for personal and commercial use.](https://refinery.fal.media/url/https%3A%2F%2Fstorage.googleapis.com%2Ffal_cdn%2Ffal%2FUpscale-1.jpeg/tr:w-1920,q-80/Upscale-1.webp)

![Image editing with FLUX.2 [pro] from Black Forest Labs. Ideal for high-quality image manipulation, style transfer, and sequential editing workflows](https://refinery.fal.media/url/https%3A%2F%2Fv3b.fal.media%2Ffiles%2Fb%2Fpenguin%2FUfryXXm9my6IM8HsoP9FL_054c2c2953dc491996904114c6e04836.jpg/tr:w-1920,q-80/UfryXXm9my6IM8HsoP9FL_054c2c2953dc491996904114c6e04836.webp)

![FLUX1.1 [pro] is an enhanced version of FLUX.1 [pro] with improved image generation capabilities, delivering superior composition, detail, and artistic fidelity compared to its predecessor.](https://refinery.fal.media/url/https%3A%2F%2Fstorage.googleapis.com%2Ffalserverless%2Fgallery%2Fturbo_thumbnail.jpg/tr:w-1920,q-80/turbo_thumbnail.webp)

![FLUX.1 Kontext [pro] handles both text and reference images as inputs, seamlessly enabling targeted, local edits and complex transformations of entire scenes.](https://refinery.fal.media/url/https%3A%2F%2Fstorage.googleapis.com%2Ffal_cdn%2Ffal%2FTraining-2.jpg/tr:w-1920,q-80/Training-2.webp)

![Text-to-image generation with FLUX.2 [pro] from Black Forest Labs. Optimized for maximum quality, exceptional photorealism and artistic images.](https://refinery.fal.media/url/https%3A%2F%2Fv3b.fal.media%2Ffiles%2Fb%2Fpenguin%2FeZetcrsZI6AQLCD3f5gaI_b173ae004bdd4108bd1be54eb6e49c7a.jpg/tr:w-1920,q-80/eZetcrsZI6AQLCD3f5gaI_b173ae004bdd4108bd1be54eb6e49c7a.webp)

![Super fast endpoint for the FLUX.1 [dev] model with LoRA support, enabling rapid and high-quality image generation using pre-trained LoRA adaptations for personalization, specific styles, brand identities, and product-specific outputs.](https://refinery.fal.media/url/https%3A%2F%2Ffal.media%2Ffiles%2Felephant%2FRqIQsOY3cgQMMtCedJKlf_c2fc262516d24b94afdc17a747292710.jpg/tr:w-1920,q-80/RqIQsOY3cgQMMtCedJKlf_c2fc262516d24b94afdc17a747292710.webp)

![FLUX1.1 [pro] ultra is the newest version of FLUX1.1 [pro], maintaining professional-grade image quality while delivering up to 2K resolution with improved photo realism.](https://refinery.fal.media/url/https%3A%2F%2Fstorage.googleapis.com%2Ffalserverless%2Fgallery%2Fflux-pro-v1-1-ultra.webp/tr:w-1920,q-80/flux-pro-v1-1-ultra.webp)

![Text-to-image generation with FLUX.2 [klein] 9B from Black Forest Labs. Enhanced realism, crisper text generation, and native editing capabilities.](https://refinery.fal.media/url/https%3A%2F%2Fv3b.fal.media%2Ffiles%2Fb%2F0a8a7f3c%2F90FKDpwtSCZTqOu0jUI-V_64c1a6ec0f9343908d9efa61b7f2444b.jpg/tr:w-1920,q-80/90FKDpwtSCZTqOu0jUI-V_64c1a6ec0f9343908d9efa61b7f2444b.webp)

![Text-to-image generation with FLUX.2 [dev] from Black Forest Labs. Enhanced realism, crisper text generation, and native editing capabilities.](https://refinery.fal.media/url/https%3A%2F%2Fv3b.fal.media%2Ffiles%2Fb%2Fpenguin%2FzSBCJtPpeIQwR5AC_IamX_b1e1137961754e4d851907c21f8c20cd.jpg/tr:w-1920,q-80/zSBCJtPpeIQwR5AC_IamX_b1e1137961754e4d851907c21f8c20cd.webp)

![Fast endpoint for the FLUX.1 Kontext [dev] model with LoRA support, enabling rapid and high-quality image editing using pre-trained LoRA adaptations for specific styles, brand identities, and product-specific outputs.](https://refinery.fal.media/url/https%3A%2F%2Fstorage.googleapis.com%2Ffal_cdn%2Ffal%2FUpscale-3.jpeg/tr:w-1920,q-80/Upscale-3.webp)

![LoRA trainer for FLUX.1 Kontext [dev]](https://refinery.fal.media/url/https%3A%2F%2Fv3.fal.media%2Ffiles%2Fmonkey%2FpYXiffttc2Skv36wflufu_dec4efe0d27e4527b64acfbc0e91536a.jpg/tr:w-1920,q-80/pYXiffttc2Skv36wflufu_dec4efe0d27e4527b64acfbc0e91536a.webp)

![Super fast endpoint for the FLUX.1 [dev] model with LoRA support, enabling rapid and high-quality image generation using pre-trained LoRA adaptations for personalization, specific styles, brand identities, and product-specific outputs.](https://refinery.fal.media/url/https%3A%2F%2Ffal.media%2Ffiles%2Fzebra%2F6CK9OSIhC3AAEhihyCqsh_9a84ecc9c66343d688ccdd5c9f57c80b.jpg/tr:w-1920,q-80/6CK9OSIhC3AAEhihyCqsh_9a84ecc9c66343d688ccdd5c9f57c80b.webp)

![Text-to-image generation with FLUX.2 [dev] from Black Forest Labs. Enhanced realism, crisper text generation, and native editing capabilities, all at turbo speed.](https://refinery.fal.media/url/https%3A%2F%2Fv3b.fal.media%2Ffiles%2Fb%2F0a871494%2Fj8F-tmy_dz4TyImvIHj19_510cc93373ef451386734b7e05711de1.jpg/tr:w-1920,q-80/j8F-tmy_dz4TyImvIHj19_510cc93373ef451386734b7e05711de1.webp)

![Text-to-image generation with FLUX.2 [dev] from Black Forest Labs. Enhanced realism, crisper text generation, and native editing capabilities, in a flash.](https://refinery.fal.media/url/https%3A%2F%2Fv3b.fal.media%2Ffiles%2Fb%2F0a871486%2FtX7YdfQViGtCE7ZjxOCph_5f5262a21e9e426e8981ea9513d11999.jpg/tr:w-1920,q-80/tX7YdfQViGtCE7ZjxOCph_5f5262a21e9e426e8981ea9513d11999.webp)

![Fastest inference in the world for the 12 billion parameter FLUX.1 [schnell] text-to-image model.](https://refinery.fal.media/url/https%3A%2F%2Fstorage.googleapis.com%2Ffal_cdn%2Ffal%2FUpscale-2.jpg/tr:w-1920,q-80/Upscale-2.webp)

![FLUX.2 [max] delivers state-of-the-art image generation and advanced image editing with exceptional realism, precision, and consistency.](https://refinery.fal.media/url/https%3A%2F%2Fv3b.fal.media%2Ffiles%2Fb%2F0a868a0f%2FzL7LNUIqnPPhZNy_PtHJq_330f66115240460788092cb9523b6aba.jpg/tr:w-1920,q-80/zL7LNUIqnPPhZNy_PtHJq_330f66115240460788092cb9523b6aba.webp)

![Fine-tune FLUX.2 [klein] 4B from Black Forest Labs with custom datasets. Create specialized LoRA adaptations for specific styles and domains.](https://refinery.fal.media/url/https%3A%2F%2Fv3b.fal.media%2Ffiles%2Fb%2F0a8b082e%2FN8Fy12FSedqMd-2Ehh8z1_70e50238ee6b479ebd61270840b4806e.jpg/tr:w-1920,q-80/N8Fy12FSedqMd-2Ehh8z1_70e50238ee6b479ebd61270840b4806e.webp)

![Fine-tune FLUX.2 [klein] 9B from Black Forest Labs with custom datasets. Create specialized LoRA adaptations for specific editing tasks.](https://refinery.fal.media/url/https%3A%2F%2Fv3b.fal.media%2Ffiles%2Fb%2F0a8b082b%2F4dsf0LE8NoXuk9Pz0Ziue_d7c1c380c4d04e03b820d06500a5749f.jpg/tr:w-1920,q-80/4dsf0LE8NoXuk9Pz0Ziue_d7c1c380c4d04e03b820d06500a5749f.webp)

![Fine-tune FLUX.2 [dev] from Black Forest Labs with custom datasets. Create specialized LoRA adaptations for specific styles and domains.](https://refinery.fal.media/url/https%3A%2F%2Fv3b.fal.media%2Ffiles%2Fb%2Ftiger%2FnYv87OHdt503yjlNUk1P3_2551388f5f4e4537b67e8ed436333bca.jpg/tr:w-1920,q-80/nYv87OHdt503yjlNUk1P3_2551388f5f4e4537b67e8ed436333bca.webp)

![The FLUX.1 Kontext [pro] text-to-image endpoint delivers state-of-the-art image generation results with unprecedented prompt following, photorealistic rendering, and flawless typography.](https://refinery.fal.media/url/https%3A%2F%2Ffal.media%2Ffiles%2Fzebra%2FVOrzt92hNVLX9m9jB-7-4_deea28b6b45344d4aa4eb3be14b3478e.jpg/tr:w-1920,q-80/VOrzt92hNVLX9m9jB-7-4_deea28b6b45344d4aa4eb3be14b3478e.webp)

![Text-to-image generation with FLUX.2 [flex] from Black Forest Labs. Features adjustable inference steps and guidance scale for fine-tuned control. Enhanced typography and text rendering capabilities.](https://refinery.fal.media/url/https%3A%2F%2Fv3b.fal.media%2Ffiles%2Fb%2Fpanda%2FLqyVE8NElm_vf-t27Yfkz_6c1dd3323df343e4a3ec968d8f67024c.jpg/tr:w-1920,q-80/LqyVE8NElm_vf-t27Yfkz_6c1dd3323df343e4a3ec968d8f67024c.webp)

![Text-to-image generation with LoRA support for FLUX.2 [dev] from Black Forest Labs. Custom style adaptation and fine-tuned model variations.](https://refinery.fal.media/url/https%3A%2F%2Fv3b.fal.media%2Ffiles%2Fb%2Fpanda%2FtOKnFZKepFeCNbgp6-ndM_7aba1231214a4c0e9446a7c2e02a9289.jpg/tr:w-1920,q-80/tOKnFZKepFeCNbgp6-ndM_7aba1231214a4c0e9446a7c2e02a9289.webp)