Seedream, starting at $0.03/image, is the better default for cost-sensitive production pipelines, while Nano Banana earns its premium ($0.08/image) for semantic reasoning, character consistency, and web-grounded generation.

This guide breaks down Nano Banana vs. Seedream, covering architecture, output quality, text rendering, speed, pricing, editing workflows, and the specific use cases each family handles best, so you can pick the right one.

TL;DR

Seedream is the better default for most developers building production image pipelines.

The family starts at $0.03/image on fal, with the newest Seedream 5.0 Lite generating at $0.035/image, and the entire lineup sits at or below $0.04 per generation.

If cost-per-image is your primary constraint, Seedream gives you more headroom at scale.

Nano Banana earns its premium when you need semantic reasoning, character consistency across generations, or web-grounded outputs.

Nano Banana 2 costs $0.08/image at 1K resolution on fal and includes features the Seedream family doesn't offer yet, like thinking modes, web search grounding, and character consistency for up to 5 people without fine-tuning.

Both families are available on fal, where you can test them in the playground or integrate through the API in minutes.

fal gives you access to Nano Banana, Seedream, and hundreds of other models through a single API with pay-per-use pricing and no GPU management.

That means you can compare both families side by side without setting up separate integrations.

Here's how they stack up:

| | Nano Banana | Seedream |
|---|---|---|
| Creator | Google | ByteDance |
| Architecture | Gemini multimodal foundation models (not diffusion) | Diffusion Transformer (DiT) with VAE |
| Best for | Semantic reasoning, character consistency, web-grounded generation | Cost-effective production, creative advertising, multi-image composition |
| Family price range | $0.039/image (original) to $0.15/image (Pro) | $0.03/image (v3.0, v4.0) to $0.04/image (v4.5) |
| Newest model | Nano Banana 2 (Gemini 3.1 Flash Image), $0.08/image at 1K | Seedream 5.0 Lite, $0.035/image |
| Text rendering | Strong (character-by-character validated, multilingual) | Strong (bilingual Chinese-English, typography-optimized) |
| Editing endpoints | Nano Banana 2 Edit ($0.08/image), Nano Banana Pro Edit ($0.15/image) | Seedream 4.0 Edit ($0.03/image), 4.5 Edit ($0.04/image), 5.0 Lite Edit ($0.035/image) |
| Max resolution | Up to 4K (Nano Banana 2 and Pro) | Up to 4096x4096 (Seedream 4.5) |
| Character consistency | Up to 5 people across generations | Not documented as a standalone feature |
| Web search grounding | +$0.015 per generation (Nano Banana 2 and Pro) | Not available |
| Thinking mode | Minimal and High levels (Nano Banana 2) | Not available |
| Reference images (editing) | Up to 14 images | Up to 10 images |
| Batch generation | 1-4 images per request | 1-6 images per request |
| Output formats | PNG, JPEG, WebP | PNG |
| Watermarking / safety | SynthID digital watermarking on all outputs | Safety checker (enabled by default, configurable via API) |

The Architecture Split: Multimodal LLM vs. Diffusion Transformer

Nano Banana and Seedream take fundamentally different approaches to generating images from text.

Nano Banana is built on Google's Gemini foundation.

The original Nano Banana runs on Gemini 2.5 Flash Image, Nano Banana 2 runs on Gemini 3.1 Flash Image, and Nano Banana Pro runs on Gemini 3 Pro Image. None of them are diffusion models.

That's the first thing worth understanding, because it changes what "prompting" means.

Traditional image generators treat your prompt as a collection of weighted tokens, matching keywords to visual concepts and blending them.

The Gemini architecture processes your prompt through the same multimodal reasoning pipeline that powers conversational AI.

It reasons about composition, lighting, spatial relationships, and creative intent before rendering anything.

Seedream, built by ByteDance, takes the diffusion route but pushes it further than most.

Seedream uses a Diffusion Transformer (DiT) paired with a powerful Variational Autoencoder (VAE).

Earlier versions of the Seedream architecture integrated a large language model as the text encoder, rather than using CLIP or T5, as most diffusion systems do.

This gives Seedream stronger prompt understanding than you'd expect from a typical diffusion model, particularly for bilingual Chinese-English text rendering.

Seedream 4.0 introduced distillation techniques that enabled generation faster than Seedream 3.0 while maintaining quality.

Seedream 4.5 scaled the architecture further with higher resolution support (up to 4096x4096 per the API schema) and refined multi-image composition for preserving subject details across sources.

Seedream 5.0 Lite supports a lower ceiling of roughly 9MP (3072x3072), at $0.035/image.

The practical difference?

Nano Banana reasons about your prompt like a language model.

Seedream diffuses your prompt through an architecture designed for production speed and cost.

Speed: Where the Gap Actually Matters

Speed benchmarks for both families are harder to pin down than you'd expect.

Neither Google nor ByteDance has published comprehensive public benchmarks for their latest models, and the numbers that do exist come from different contexts.

For the Seedream family, the best data point we have at fal is that Seedream 3.0 generates 1K images in roughly 3 seconds.

Per ByteDance's Seed blog, Seedream 4.0's distillation techniques make it over 10 times faster than Seedream 3.0 at high-quality generation, though it can also produce higher-fidelity output at longer inference times when needed.

Seedream 4.5 processes edits in approximately 60 seconds on fal, per the fal repository, though text-to-image generation is faster than editing. Seedream 5.0 Lite doesn't have published generation times, though its "Lite" designation and pricing suggest it prioritizes speed.

For Nano Banana, the original model (Gemini 2.5 Flash Image) was designed for fast generation on the Flash architecture.

Nano Banana 2 inherits this Flash-optimized inference from Gemini 3.1 Flash Image. Nano Banana Pro, built on the heavier Gemini 3 Pro backbone, trades speed for reasoning depth.

Google hasn't published official speed benchmarks for any of these models; Pro is explicitly described as "optimized for quality rather than speed metrics."

What we can say: both families offer fast enough generation for most API workflows. The speed difference is most likely to matter in two scenarios.

First, iteration loops. If you're dialing in a prompt over 50 generations, even a few seconds difference per image compounds fast.

Seedream 3.0's documented 3-second generation time makes it one of the fastest options for rapid prototyping.

Second, batch pipelines. If you're generating 500 product images for a catalog, a faster model finishes the job sooner. With per-image pricing, the saving is wall-clock time rather than spend.

If your workflow depends on real-time features like live previews or interactive editors, test both families in fal's playground to compare actual generation times for your specific prompts and resolutions before committing.

Side-by-Side: Image Comparison Tests

To see how these differences play out visually, here are head-to-head generations from Nano Banana 2 and Seedream 5.0 Lite using identical prompts on fal.

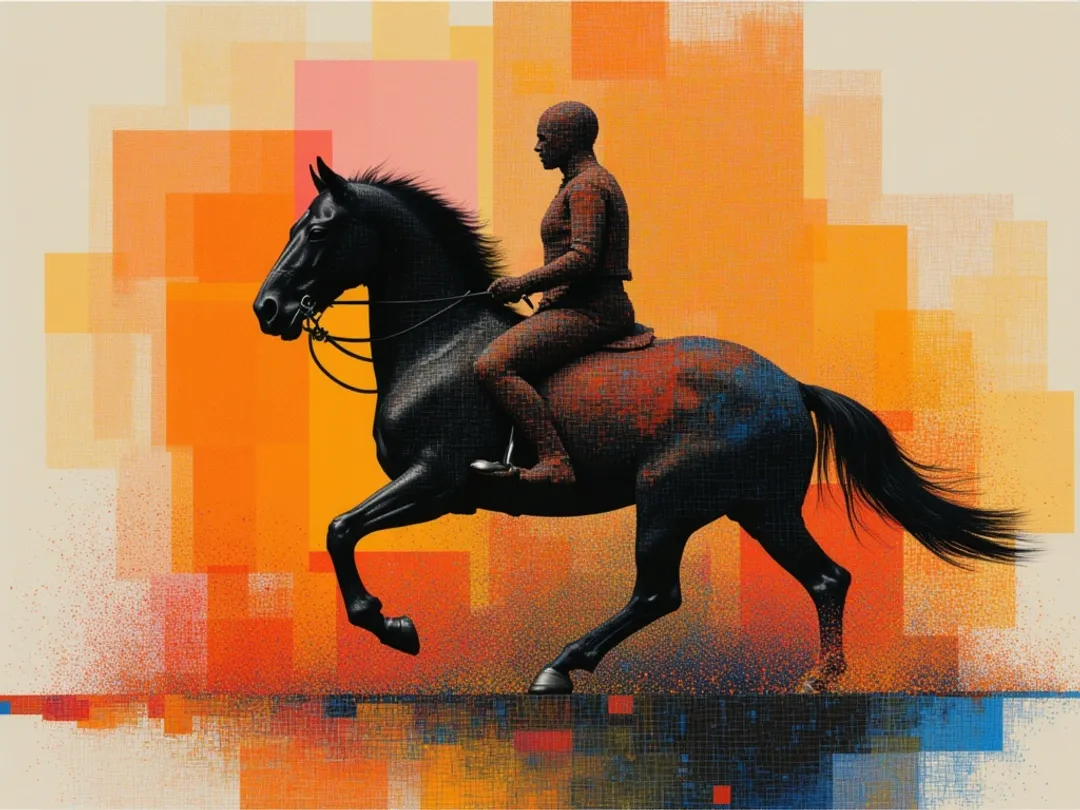

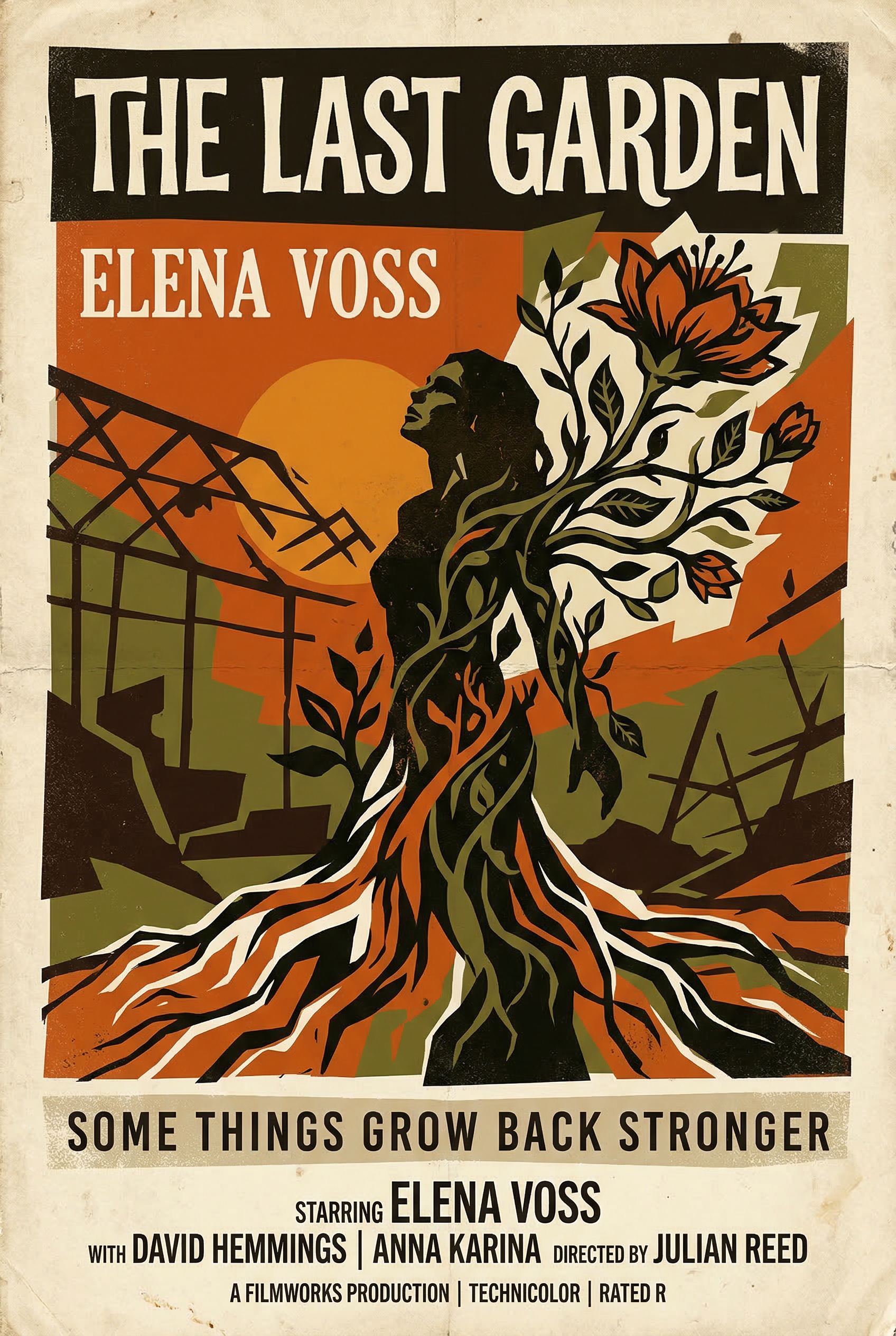

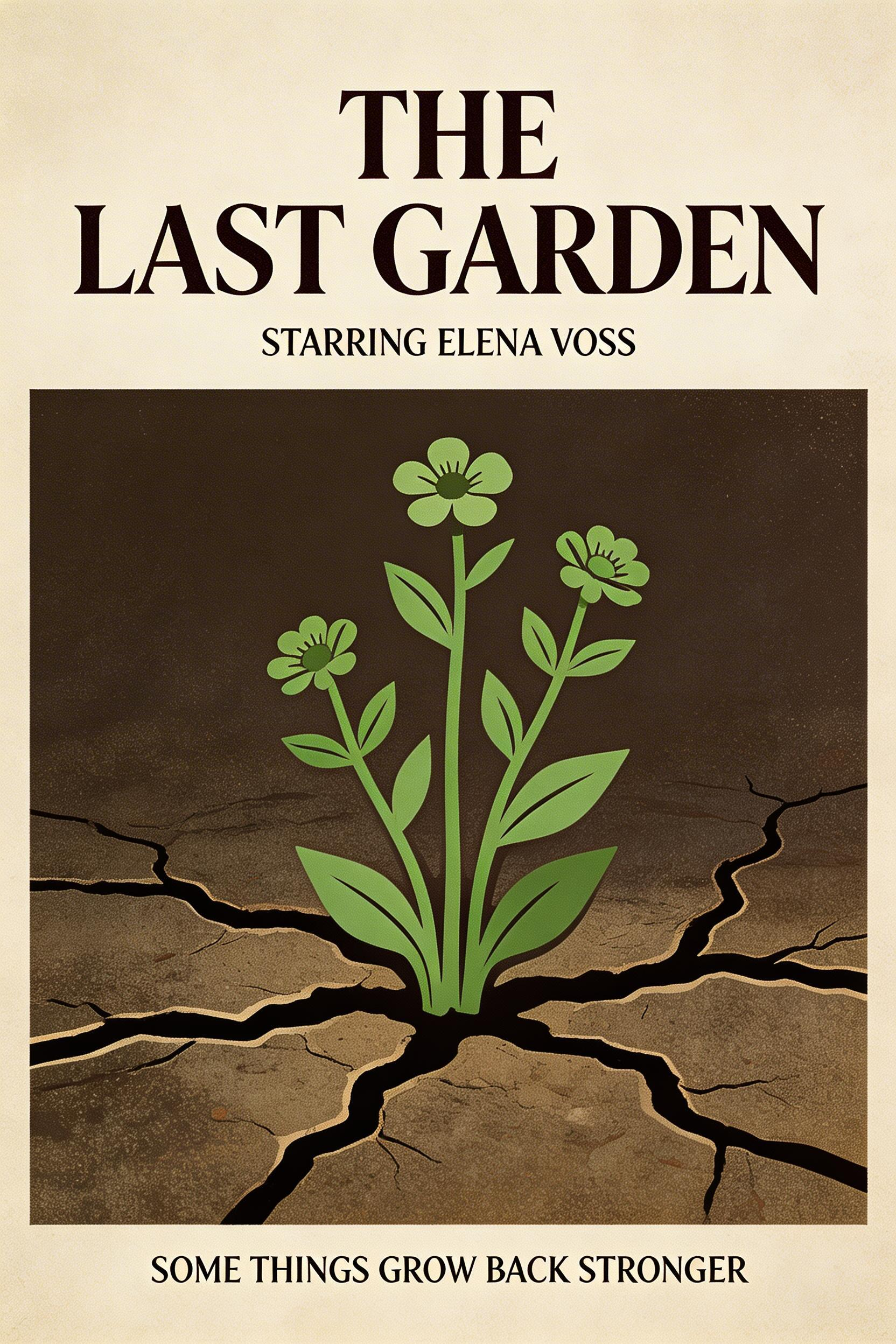

Test 1: Text Rendering

Prompt: "A vintage movie poster for a film called 'The Last Garden' starring Elena Voss, with tagline 'Some things grow back stronger' in serif typography, 1970s Saul Bass-inspired design"

Nano Banana 2:

Generated using Nano Banana 2 on fal, an AI model from Google.

Seedream 5.0 Lite:

Generated using Seedream 5.0 Lite on fal, an AI model from ByteDance.

Test 2: Product Photography

Prompt: "A matte black ceramic coffee mug on a sunlit marble countertop, morning light streaming through linen curtains, a small succulent in the background slightly out of focus, editorial product photography style"

Nano Banana 2:

Generated using Nano Banana 2 on fal, an AI model from Google.

Seedream 5.0 Lite:

Generated using Seedream 5.0 Lite on fal, an AI model from ByteDance.

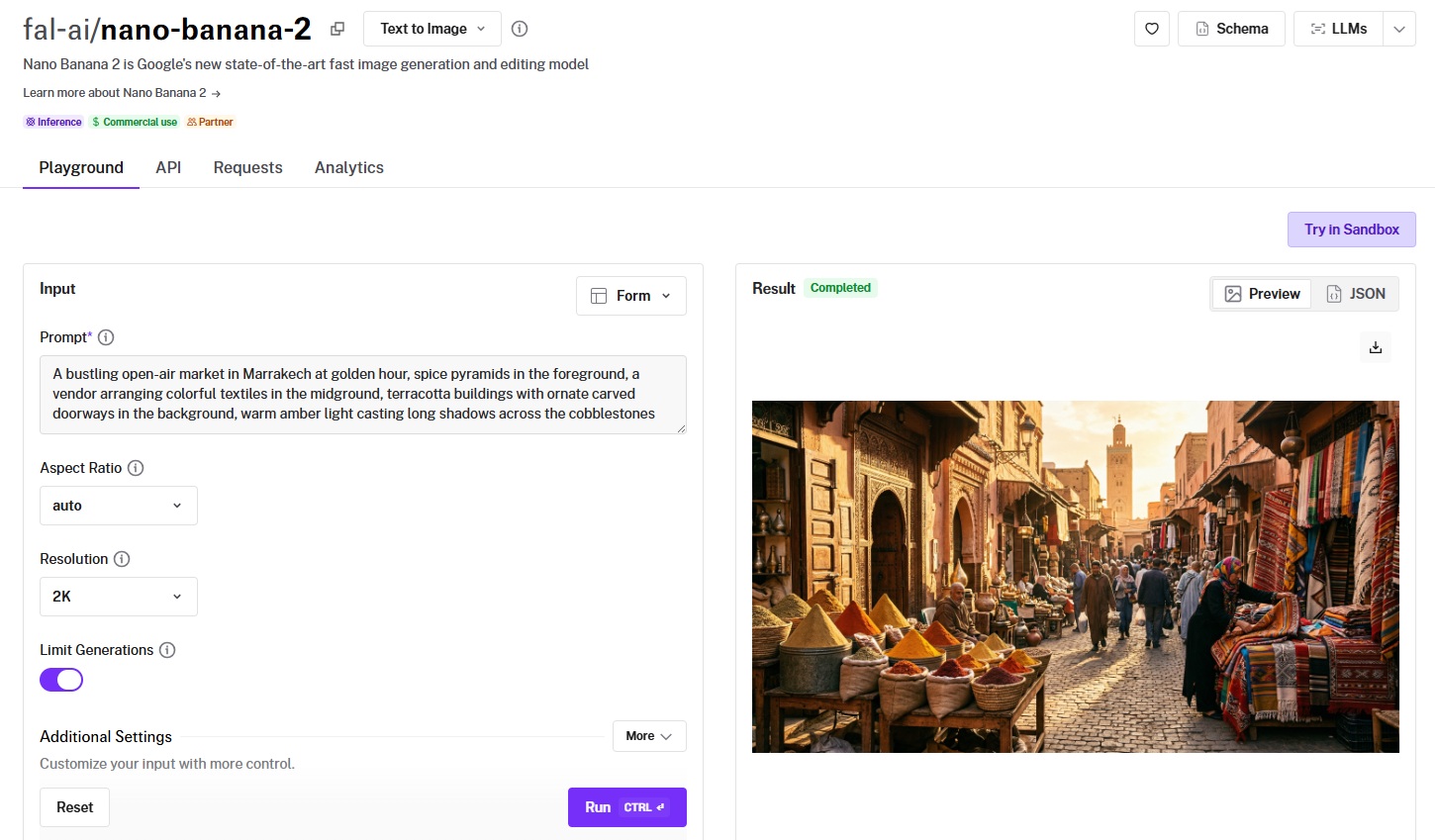

Test 3: Complex Multi-Element Scene

Prompt: "A bustling open-air market in Marrakech at golden hour, spice pyramids in the foreground, a vendor arranging colorful textiles in the midground, terracotta buildings with ornate carved doorways in the background, warm amber light casting long shadows across the cobblestones"

Nano Banana 2:

Generated using Nano Banana 2 on fal, an AI model from Google.

Seedream 5.0 Lite:

Generated using Seedream 5.0 Lite on fal, an AI model from ByteDance.

Pricing: The Math That Matters

The per-image cost difference between Nano Banana and Seedream is significant.

Seedream is the cheaper family across every tier, and the gap widens at scale.

Nano Banana pricing on fal

- Nano Banana (original, Gemini 2.5 Flash Image): $0.039/image. The original model with a single resolution tier and straightforward flat pricing.

- Nano Banana 2 (Gemini 3.1 Flash Image): $0.08/image at 1K resolution (standard). 0.5K (512px) outputs cost $0.06/image (0.75x rate). 2K outputs cost $0.12/image (1.5x rate). 4K outputs cost $0.16/image (2x rate). Web search grounding adds $0.015 per generation if enabled. High thinking mode adds $0.002 per generation if enabled.

- Nano Banana Pro (Gemini 3 Pro Image): $0.15/image at 1K resolution. 4K outputs cost $0.30/image (2x rate). Web search grounding adds $0.015 per generation if enabled.
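
The Nano Banana 2 tiers above reduce to a base price, a resolution multiplier, and two optional add-ons. A minimal sketch of that arithmetic follows. The function and constant names are hypothetical (they are not part of any fal SDK), and it assumes one image per generation, so the per-generation add-ons apply once per image.

```javascript
// Rates are copied from the pricing list above; all names here are illustrative.
const NB2_BASE_PRICE = 0.08; // USD per image at 1K
const NB2_RESOLUTION_MULTIPLIER = new Map([
  ["0.5K", 0.75],
  ["1K", 1],
  ["2K", 1.5],
  ["4K", 2],
]);
const WEB_SEARCH_SURCHARGE = 0.015; // USD per generation
const HIGH_THINKING_SURCHARGE = 0.002; // USD per generation

// Assumes one image per generation, so each surcharge is added once.
function nanoBanana2Price({ resolution = "1K", webSearch = false, highThinking = false } = {}) {
  if (!NB2_RESOLUTION_MULTIPLIER.has(resolution)) {
    throw new Error(`Unknown resolution tier: ${resolution}`);
  }
  const price =
    NB2_BASE_PRICE * NB2_RESOLUTION_MULTIPLIER.get(resolution) +
    (webSearch ? WEB_SEARCH_SURCHARGE : 0) +
    (highThinking ? HIGH_THINKING_SURCHARGE : 0);
  // Round to a tenth of a cent to hide floating-point noise.
  return Math.round(price * 1000) / 1000;
}

console.log(nanoBanana2Price({ resolution: "2K" })); // 0.12
console.log(nanoBanana2Price({ resolution: "4K", webSearch: true, highThinking: true })); // 0.177
```

Even with both add-ons enabled, the resolution tier dominates the per-image cost.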

Seedream pricing on fal

- Seedream 3.0: $0.03/image (text-to-image only, no editing endpoint). Bilingual Chinese-English generation with resolution up to 2K.

- Seedream 4.0: $0.03/image (text-to-image), $0.03/image (editing). Unified generation and editing at the same price point as v3.0.

- Seedream 4.5: $0.04/image (text-to-image), $0.04/image (editing). Resolution up to 4096x4096 (16.8MP), with up to 10 reference images for editing.

- Seedream 5.0 Lite: $0.035/image (text-to-image), $0.035/image (editing). Resolution up to 3072x3072 (9MP).

What this looks like at scale

For a team generating 1,000 images per month at standard resolution: Seedream 5.0 Lite costs $35. Nano Banana 2 costs $80. That's a $45 difference per month.

At 10,000 images per month, Seedream 5.0 Lite costs $350. Nano Banana 2 costs $800. The gap grows to $450 per month.

But if that same team routes quick drafts through Seedream 3.0 at $0.03/image and only sends final hero assets to Nano Banana 2 at $0.08/image, the blended cost drops considerably.

A 70/30 split of Seedream 3.0 and Nano Banana 2 across 10,000 images comes to roughly $450 (7,000 x $0.03 + 3,000 x $0.08), about 44% less than the $800 it costs to run everything on Nano Banana 2.
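
To rerun this math for your own volumes and split, a small helper is enough. This is a sketch using the per-image prices quoted in this article. The function names are hypothetical, and it ignores resolution tiers and add-ons.

```javascript
// Cost of sending every image to a single model, rounded to cents.
function flatCost(totalImages, pricePerImage) {
  return Math.round(totalImages * pricePerImage * 100) / 100;
}

// Cost when `draftShare` of the volume goes to the cheaper model
// and the remainder goes to the premium model.
function blendedCost(totalImages, draftShare, draftPrice, heroPrice) {
  const draftImages = Math.round(totalImages * draftShare);
  const heroImages = totalImages - draftImages;
  return Math.round((draftImages * draftPrice + heroImages * heroPrice) * 100) / 100;
}

const allNanoBanana2 = flatCost(10000, 0.08); // 800
const blended = blendedCost(10000, 0.7, 0.03, 0.08); // 7,000 x 0.03 + 3,000 x 0.08 = 450
const savings = 1 - blended / allNanoBanana2; // 0.4375, i.e. about 44%

console.log({ allNanoBanana2, blended, savings });
```

Changing `draftShare` shows how quickly the blended cost moves: every 10 percentage points shifted from Nano Banana 2 to Seedream 3.0 saves $50 per 10,000 images at these prices.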

A practical approach: You can use Seedream 3.0 ($0.03/image) or Seedream 5.0 Lite ($0.035/image) for rapid prompt iteration and volume work, then route hero assets through Nano Banana 2 ($0.08/image) or Nano Banana Pro ($0.15/image) when you need web-grounded visuals or deep compositional reasoning.

How Is Seedream Different from Nano Banana?

Beyond the core architectural split, several capabilities are implemented differently or exist in only one family.

Nano Banana 2's thinking mode

Nano Banana 2 offers two thinking levels: minimal and high.

These let you dial between speed and reasoning depth within the same model, without switching endpoints.

Minimal thinking can prioritize faster generation for straightforward prompts, while high thinking spends more time reasoning about complex compositions, spatial relationships, and text rendering accuracy.

High thinking adds $0.002 per generation, a negligible cost that can improve output quality on challenging prompts.

Nano Banana's web search grounding

Both Nano Banana 2 and Nano Banana Pro support optional web search grounding through the enable_web_search parameter.

This lets the model pull in real-time information from the web during generation, which means you can generate images that reference current events, real products, or factual content without manually describing everything in the prompt.

No Seedream model offers this capability.
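
As a rough sketch, enabling grounding is a single extra field on the request input. The `enable_web_search` parameter and the endpoint ID come from this article. The `generateGrounded` helper is hypothetical, and it takes the client as an argument, on the assumption that you pass in the `fal` object imported from `@fal-ai/client`.

```javascript
// `client` is assumed to be the `fal` object from "@fal-ai/client".
// Passing it in keeps this helper easy to test with a stub.
async function generateGrounded(client, prompt) {
  return client.subscribe("fal-ai/nano-banana-2", {
    input: {
      prompt,
      enable_web_search: true, // adds $0.015 per generation
    },
  });
}

// Usage (inside an async context, with the real client):
//   import { fal } from "@fal-ai/client";
//   const result = await generateGrounded(fal, "An infographic of this week's weather in Lisbon");
```

Because grounding is billed per generation, it is worth enabling per request rather than globally.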

Nano Banana's character consistency

Nano Banana 2 and Nano Banana Pro both maintain character consistency for up to 5 people across generations without fine-tuning.

This makes them well-suited for storyboarding, campaign work, or any workflow where the same characters need to appear across multiple images.

Seedream's multi-image editing workflows (in v4.0, v4.5, and v5.0 Lite) preserve subject details when you supply reference images, but that's designed for editing with existing source material rather than generating consistent characters from text prompts alone.

Seedream's resolution range

Seedream 4.5 supports output up to 4096x4096 (16.8MP), the highest native resolution across either family.

For comparison, Nano Banana 2 and Nano Banana Pro max out at 4K resolution, and Seedream 5.0 Lite caps at 3072x3072 (9MP).

If you need the absolute largest output without a separate upscaling step, Seedream 4.5 at $0.04/image covers the most ground.

Seedream's batch generation

Seedream models support up to 6 images per generation request, compared to Nano Banana's 4.

On top of that, Seedream's max_images parameter lets each generation potentially return multiple images, giving you more variations per API call.

For workflows that depend on exploring many variations quickly, this is a meaningful throughput advantage.
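
To put a number on that, here is a small sketch that plans how many requests a job needs under each per-request cap. The caps (6 and 4) come from this article. The helper name is hypothetical, and the sketch does not model rate limits or concurrency.

```javascript
// Split a job of `totalImages` into per-request batch sizes,
// never exceeding `maxPerRequest` images in one request.
function planBatches(totalImages, maxPerRequest) {
  const batches = [];
  let remaining = totalImages;
  while (remaining > 0) {
    const size = Math.min(remaining, maxPerRequest);
    batches.push(size);
    remaining -= size;
  }
  return batches;
}

console.log(planBatches(500, 6).length); // Seedream cap: 84 requests
console.log(planBatches(500, 4).length); // Nano Banana cap: 125 requests
```

For a 500-image catalog, the higher cap cuts the request count by about a third.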

Seedream's unified generation and editing architecture

Starting with Seedream 4.0, ByteDance unified text-to-image generation and image editing into a single model.

This means the same architecture handles both creation and modification.

Nano Banana also offers both text-to-image and editing endpoints, but these run on separate API paths rather than a single unified model.

Output format flexibility

Nano Banana models support PNG, JPEG, and WebP output formats, giving you control over file size and quality trade-offs for different delivery contexts.

Seedream models on fal output PNG only. If your pipeline requires JPEG or WebP output directly from generation, you'll need Nano Banana or a conversion step after Seedream.

Nano Banana 2 also supports extreme aspect ratios (4:1, 1:4, 8:1, 1:8) that neither Nano Banana Pro nor any Seedream model offers, which matters for banner ads, vertical stories, and panoramic content.

How to Run Both Model Families on fal

You can run every Nano Banana and Seedream model through fal's API or test them in the playground at fal.

The integration pattern is the same across all models. If you've already integrated one, switching to another is an endpoint change plus, across families, minor input adjustments.

Here's what the code looks like in JavaScript:

```javascript
import { fal } from "@fal-ai/client";

// Nano Banana 2
const nbResult = await fal.subscribe("fal-ai/nano-banana-2", {
  input: {
    prompt: "A ceramic coffee mug on a marble countertop, morning light",
    aspect_ratio: "4:3",
    output_format: "png",
  },
});

// Seedream 5.0 Lite: same pattern, different endpoint
const sdResult = await fal.subscribe(
  "fal-ai/bytedance/seedream/v5/lite/text-to-image",
  {
    input: {
      prompt: "A ceramic coffee mug on a marble countertop, morning light",
      image_size: "auto_2K",
    },
  }
);
```

Both families work through the same fal API infrastructure.

That means you can build a routing system where budget-sensitive generation goes to Seedream and semantic-heavy requests go to Nano Banana with nothing but a string swap and minor input adjustments.

Note that the input schemas differ slightly between families. Nano Banana uses aspect_ratio and output_format parameters, while Seedream uses image_size presets.

But the overall integration pattern (auth, queue handling, webhook support, and response format) stays identical across both families on fal.
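
One way to sketch that routing layer is a pure function that picks the endpoint and builds the family-specific input. The endpoint IDs and input fields are the ones used in this article. The `routeRequest` name, the flag names, and the default aspect ratio and image size are assumptions made for illustration.

```javascript
const ENDPOINTS = {
  nanoBanana2: "fal-ai/nano-banana-2",
  seedream5Lite: "fal-ai/bytedance/seedream/v5/lite/text-to-image",
};

// Hypothetical routing rule: escalate to Nano Banana 2 only when a request
// needs something Seedream doesn't offer; otherwise use the cheaper model.
function routeRequest({
  prompt,
  needsWebGrounding = false,
  needsCharacterConsistency = false,
  needsDeepReasoning = false,
}) {
  if (needsWebGrounding || needsCharacterConsistency || needsDeepReasoning) {
    const input = { prompt, aspect_ratio: "4:3", output_format: "png" };
    if (needsWebGrounding) {
      input.enable_web_search = true;
    }
    return { endpoint: ENDPOINTS.nanoBanana2, input };
  }
  return {
    endpoint: ENDPOINTS.seedream5Lite,
    input: { prompt, image_size: "auto_2K" },
  };
}

// Usage with the real client (inside an async context):
//   const { endpoint, input } = routeRequest({ prompt, needsWebGrounding: true });
//   const result = await fal.subscribe(endpoint, { input });
```

Keeping the routing decision separate from the API call makes the rule easy to unit test and to adjust as pricing or capabilities change.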

Every model also has a playground on fal, where you can test prompts in your browser before writing any code.

When to Use Which: A Decision Framework

Rather than declaring a winner, here's how I'd think about routing between the two families.

Choose Seedream when

- You're generating at scale and cost per image is the primary constraint.

- Your workflow involves e-commerce product mockups, marketing assets, or creative advertising where visual polish matters more than deep semantic reasoning.

- You need bilingual Chinese-English text rendering in generated images.

- You want the highest native resolution in either family (Seedream 4.5 at 4096x4096).

- You're compositing multiple source images into a single output and need up to 10 reference images per edit.

Choose Nano Banana when

- Your prompts involve complex compositional reasoning where the model needs to understand intent, not just match keywords.

- You need consistent characters across multiple generations for storyboarding or campaign sequences.

- Your workflow benefits from web-grounded generation where images reference real-time information.

- You want to dial reasoning depth per prompt using thinking modes rather than switching models.

- You're building an interactive editing workflow that needs up to 14 reference images per request.

Use both

Use both when you want to route volume work and rapid iteration through Seedream at $0.03-$0.04/image, then selectively escalate assets that need deeper reasoning, character consistency, or web grounding to Nano Banana at $0.08-$0.15/image.

Since both families run on fal's infrastructure with the same API patterns, this routing logic takes minutes to implement.

Run Nano Banana and Seedream on fal

The AI image generation space has more capable models now than at any point in the past two years.

And that's actually the challenge: picking the right one for each use case requires testing, which costs time and credits.

If you want access to both Nano Banana and Seedream through a single API with pay-per-use pricing and no GPU management, fal is the fastest way to get started.

Test any model from either family in the playground or plug into the API in minutes.