fal gives you access to every top AI video model through a single API with pay-per-use pricing; Veo 3.1, Sora 2 Pro, and Kling 3 Pro lead the pack for quality and capability.

In this guide, I'll review the 10 best AI video generators in 2026, covering motion quality, output resolution, generation speed, pricing, and what each model is best for, so you don't have to burn cash on trial and error.

What Factors Should Be Considered When Evaluating AI Video Generators?

Motion Quality and Temporal Consistency

This is the first thing I checked for every model on this list. Can it generate motion that looks natural across the entire clip, or does it fall apart after the first two seconds? I paid attention to physics accuracy, object permanence, and how well characters maintain their appearance from frame to frame. Some models produce gorgeous opening frames but devolve into warped faces and melting limbs by the end of the clip. Others stay rock-solid for the full duration but produce stiff, robotic movement.

Note: I tested every model with the same prompt so you can compare the results directly:

Create a 5-second cinematic slow-motion video of a golden retriever leaping into a lake at sunset. Low-angle tracking shot follows the jump. Warm amber lighting, shallow depth of field, water droplets frozen mid-splash. On-screen text: Display centered white text reading "Pure Joy" from second 1 to second 4. Text must be sharp, stable, correctly spelled, and readable. Audio: Natural splash sound synced to water impact, with soft distant birds. Add subtle cinematic background music. Optional calm voiceover saying: "Pure joy, captured in a moment."

Output Resolution and Length

Not all models handle high resolution well, and clip duration varies widely. Some cap out at 5 seconds or 720p; others reach 25 seconds at 1080p, and one goes up to 4K. If you're producing content for social media, a 5-second 720p clip might work. But if you're generating product videos, ad creatives, or anything that needs to hold up on a large screen, resolution and duration matter a lot.

Generation Speed and Cost

Video generation is slow by nature. Most models take 2-6 minutes per clip, and some of the premium ones push past that. When you're iterating on a creative concept and generating 20 variations to find the right one, that time adds up fast. And so does the cost. I compared per-second pricing across all models, factoring in resolution tiers and audio support.

For example, a 10-second clip costs $1.00 on Wan 2.2 (at 720p) and $5.00 on Sora 2 Pro (at 1080p). That's a 5x difference.

Control Options

Can you control camera movement? Can you specify a start frame and an end frame? Does the model support reference images for character consistency across clips? These features separate "toy demo" generators from production-ready tools. I tested each model's ability to follow complex prompts with specific motion instructions, camera angles, and scene transitions.

Audio and Lip-Sync Support

This is the newest battleground in AI video. A year ago, every model produced silent clips. Now, the top-tier models generate synchronized audio, dialogue, environmental sounds, and ambient noise directly from the text prompt. If your workflow requires talking-head content, product ads with voiceover, or cinematic scenes with dialogue, native audio support saves you from stitching a separate audio track after the fact.

What Are The Best AI Video Generators in 2026?

The best AI video generators in 2026 are fal, Veo 3.1, and Sora 2 Pro.

Here's my shortlist of the 10 best AI video generators I reviewed:

| AI Video Generators | Best For | Price to Use |
|---|---|---|
| fal | Teams and developers who need access to every top video model through a single, fast API with pay-per-use pricing | Pay-per-use; pricing starts at $0.035 per video second with Vidu Q3. |
| Veo 3.1 | Production teams that need the highest-quality output with native audio, 4K support, and reference-to-video capabilities | From $0.10/second on fal (Fast, no audio). |
| Sora 2 Pro | Filmmakers and content creators who need the longest clips (up to 25 seconds) with synchronized dialogue | $0.30/second (720p) to $0.50/second (1080p) on fal. |
| Kling 3 Pro (o3) | Teams that want cinematic motion quality at a mid-tier price with strong prompt adherence | $0.224/second (audio off) or $0.28/second (audio on) on fal. |
| Hailuo 2.3 Pro | Teams that need reliable, fast video generation with budget and premium quality tiers | $0.49 per video generation on fal. |
| Wan 2.2 A14B | Budget-conscious teams and open-source advocates who want solid quality at the lowest cost | $0.10/second on fal. |
| Seedance 1.5 Pro | Teams that need audio-enabled video generation with start and end frame control | Roughly $0.26 per 5-second 720p video with audio on fal. |
| PixVerse v5.5 | Creators focused on stylized content, visual effects, and transitions | Per 5-second video on fal: $0.15 (360p or 540p), $0.20 (720p), $0.40 (1080p). |
| Kling 3.0 Pro (v3) | Teams that want the latest Kling model with native audio and multi-shot support | $0.224/second (audio off) or $0.336/second (audio on) on fal. |
| Vidu Q3 | Teams exploring newer models with Pro and Turbo speed tiers | $0.035/second at 360p and 540p on fal; 2.2x that rate at 720p and 1080p. |

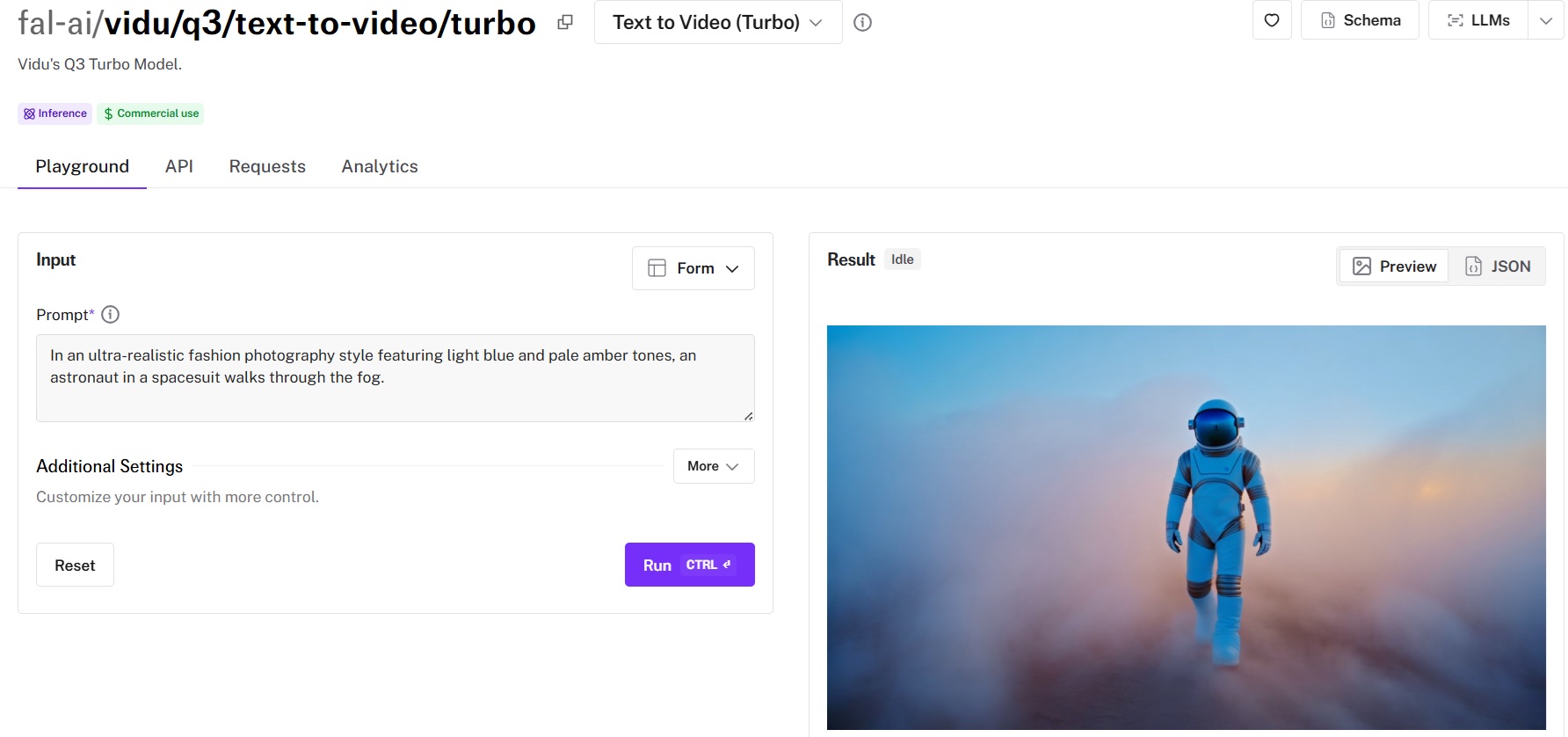

fal

fal.ai (that's us) is the best place to generate AI video in 2026 because you get access to every major video model through a single API, with pay-per-use pricing and zero GPU management.

Full disclosure: Even though fal is our platform, I'll provide an unbiased perspective on why it's the best option for AI video generation in 2026.

Right now, if you want to test Veo 3.1, Kling 2.5, Sora 2 Pro, and Wan 2.2 side by side, you'd normally need four separate accounts, four billing setups, and four different integration patterns. With fal, you integrate once. One API key, one billing dashboard, one code pattern. Changing models is literally swapping an endpoint string.

But model access alone isn't why fal takes the #1 spot. Speed is. fal's inference engine was built from scratch with custom CUDA kernels tuned to specific model architectures, not layered on top of general-purpose frameworks the way most platforms do it. Cold starts land between 5 and 10 seconds on fal, compared to 20 to 60 seconds (or more) on alternatives.

Here are the things that make fal the best platform for AI video generation.

One API for Every Video Model You Need

You don't need separate integrations with Google DeepMind, OpenAI, Kuaishou, MiniMax, ByteDance, or PixVerse. You integrate with fal, and all of them are there. The same API pattern works across all 1,000+ models on the platform. Your auth, error handling, queue logic, and billing stay identical whether you're generating with Veo 3.1 for cinematic 4K output, Kling 2.5 Turbo Pro for mid-range production, or Wan 2.2 for quick budget drafts.

In practice, this means you can ship a feature using Wan 2.2 for fast previews, let users upgrade to Veo 3.1 for polished final output, and add Sora 2 Pro for audio-enabled clips, all without rewriting your integration.
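The tiered setup described above can be sketched as a lookup from product tier to endpoint ID, with one request shape shared by every tier. This is a minimal sketch: the tier names are hypothetical, and the three endpoint IDs are assumptions to confirm on each model's fal page. Real models also accept model-specific inputs beyond `prompt`.

```typescript
// Hypothetical product tiers for a video feature.
type Tier = "preview" | "final" | "withAudio";

// Endpoint IDs are assumptions; confirm each one on the model's fal page.
const ENDPOINTS: Record<Tier, string> = {
  preview: "fal-ai/wan/v2.2-a14b/text-to-video", // Wan 2.2: cheap, fast drafts
  final: "fal-ai/veo3.1", // Veo 3.1: polished final output
  withAudio: "fal-ai/sora-2/text-to-video/pro", // Sora 2 Pro: native audio
};

interface VideoRequest {
  endpoint: string;
  input: { prompt: string };
}

// Every tier produces the same request shape; only the endpoint string changes.
function buildRequest(tier: Tier, prompt: string): VideoRequest {
  return { endpoint: ENDPOINTS[tier], input: { prompt } };
}
```

The returned pair plugs straight into the client: `const { endpoint, input } = buildRequest("final", prompt);` followed by `await fal.subscribe(endpoint, { input });`. Keeping the table in one place means adding or swapping a tier is a one-line change.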

When a new model launches, fal typically has it running on day one.

Generated using Kling 2.5 Turbo Pro on fal.

A few lines of code to get started:

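```typescript
import { fal } from "@fal-ai/client";

// Authenticate with your fal API key (read here from the FAL_KEY environment variable).
fal.config({ credentials: process.env.FAL_KEY });

// Queue the request and wait for it to finish.
// Top-level await requires running this file as an ES module.
const result = await fal.subscribe(
  "fal-ai/kling-video/v2.5-turbo/pro/text-to-video",
  {
    input: {
      prompt:
        "A golden retriever leaping into a lake at sunset, cinematic slow motion",
    },
  }
);

// result.data holds the model's output; result.requestId identifies the job.
console.log(result.data, result.requestId);
```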

Every model also has a playground where you can test it in your browser before writing any code.

Speed That Actually Matters for Production

fal's engineering team writes custom CUDA kernels for individual model architectures and uses techniques like epilogue fusion to cut out unnecessary memory transfers between GPU operations.

The infrastructure handles scaling on its own: regional GPU routing sends requests to the nearest cluster, a custom CDN delivers generated content with minimal latency, and capacity expands from zero to thousands of GPUs based on demand. No configuration on your end.

For teams building real-time features like interactive video editors, dynamic ad generation, or live preview workflows, the gap between 5-10 second cold starts and 20-60 second ones decides whether users stick around or leave.

Pay-Per-Use Pricing With No Idle Costs

fal charges per unit of output, usually per video second, though some models are billed per generation or per token. You don't reserve GPU capacity, you don't pay when your app is idle, and you don't estimate demand in advance.

Prices range from $0.035 per second for Vidu Q3 at 360p and 540p up to $0.60/second for Veo 3.1 at 4K with audio. That range means you pick the right model for the job and only pay for what you actually generate.

No hidden fees for API calls, storage, or CDN delivery. You pay for generation and computing. That's it.

Pricing

fal uses pay-as-you-go pricing with no subscriptions or minimum commitments.

Here's a snapshot of video generation costs:

- Wan 2.2 A14B: $0.10/second.

- Hailuo 2.3 Standard (768p): $0.28 per 6-second video generation.

- Wan 2.5: $0.05/second for 480p.

- Kling 2.5 Turbo Pro: $0.07/second.

- Hailuo 2.3 Pro (1080p): $0.49 per video generation.

- Veo 3.1 Fast: $0.10/second at 720p and $0.30/second at 4K.

- Sora 2 Pro: $0.30/s for 720p and $0.50/s for 1080p.

- Veo 3.1: $0.20/second without audio or $0.40/second with audio at 720p or 1080p.
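Because this list mixes per-second and per-generation billing, comparing models takes one normalization step. A minimal sketch, assuming the rates above and assuming that a per-generation model needs one full generation for every `clipSeconds` of footage (rounded up); the `Pricing` type and function names are hypothetical:

```typescript
// Two billing units that appear in fal's price list.
type Pricing =
  | { kind: "perSecond"; usdPerSecond: number }
  | { kind: "perGeneration"; usdPerGeneration: number; clipSeconds: number };

// Snapshot of some of the rates listed above.
const PRICES: Record<string, Pricing> = {
  "Wan 2.2 A14B": { kind: "perSecond", usdPerSecond: 0.1 },
  "Hailuo 2.3 Standard": { kind: "perGeneration", usdPerGeneration: 0.28, clipSeconds: 6 },
  "Kling 2.5 Turbo Pro": { kind: "perSecond", usdPerSecond: 0.07 },
  "Sora 2 Pro (1080p)": { kind: "perSecond", usdPerSecond: 0.5 },
  "Veo 3.1 (720p-1080p, audio)": { kind: "perSecond", usdPerSecond: 0.4 },
};

// Normalize to a per-second rate so the two billing units can be compared.
function effectivePerSecond(p: Pricing): number {
  return p.kind === "perSecond" ? p.usdPerSecond : p.usdPerGeneration / p.clipSeconds;
}

// Cost of `seconds` of footage, rounded to cents. Per-generation models are
// assumed to need ceil(seconds / clipSeconds) separate generations.
function quote(p: Pricing, seconds: number): number {
  const raw =
    p.kind === "perSecond"
      ? p.usdPerSecond * seconds
      : p.usdPerGeneration * Math.ceil(seconds / p.clipSeconds);
  return Math.round(raw * 100) / 100;
}
```

With these numbers, `quote(PRICES["Wan 2.2 A14B"], 10)` returns 1 and `quote(PRICES["Sora 2 Pro (1080p)"], 10)` returns 5, the 5x gap from the intro, while Hailuo 2.3 Standard normalizes to roughly $0.047/second.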

Pros & Cons

Pros:

- Every model on this list is available through one API, one billing account, and one integration.

- Fastest cold starts in the market at 5-10 seconds, built on custom CUDA kernels.

- True pay-per-use with no idle costs, subscriptions, or minimums.

- SOC 2 compliant.

Cons:

- Per-second pricing adds up fast for casual or low-volume use compared to running open-source models on your own hardware.

- No IP indemnity on generated content, so teams that need legal coverage for outputs will have to handle that separately.

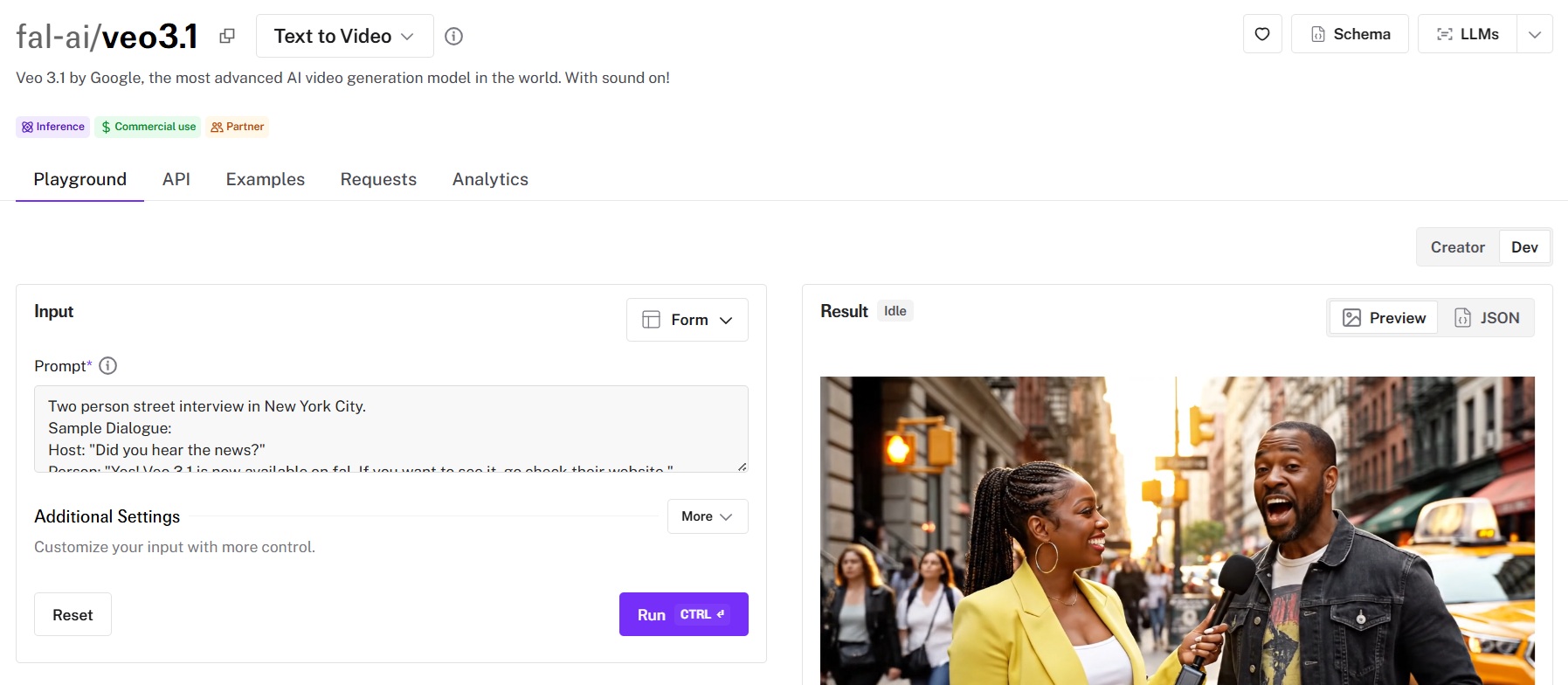

Veo 3.1

Best for: Production teams that need the highest-quality video output with native audio, 4K resolution, and multi-reference character consistency.

Similar to: Veo 3, Sora 2 Pro.

Veo 3.1 is Google DeepMind's latest video generation model, and the jump from Veo 3 isn't incremental. Native audio synthesis (dialogue, environmental sounds, ambient noise) carries over from Veo 3, and Veo 3.1 adds 4K output, reference-to-video for character consistency, and first-last-frame-to-video for precise scene control.

Performance

Generated using Veo 3.1 on fal, an AI video model from Google DeepMind: $2.40 total cost.

- Motion quality and consistency: Characters maintain their identity frame-to-frame, physics look natural, and complex motion like splashing water, flowing fabric, and animal movement holds up through the full clip.

- Output resolution and length: Up to 4K, which is a first for this category. Standard clips run at 720p or 1080p with options to push higher. Duration is from 4 to 8 seconds.

- Generation speed and cost: The full-quality model runs $0.20/second without audio and $0.40/second with audio at 720p-1080p. At 4K, it jumps to $0.40/second without audio and $0.60/second with. A single 8-second clip with audio costs $3.20 at 720p-1080p and $4.80 at 4K, so this is a premium option.

- Camera and scene control: First-last-frame-to-video is a standout. You provide a start frame and an end frame, and the model animates the transition between them. Reference-to-video lets you supply multiple character images to maintain identity across clips.

- Audio and lip-sync support: Native audio generation with synchronized dialogue, environmental sounds, and ambient noise.
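The rate table in this section can be sketched as a pair of functions. This is a minimal sketch assuming the full-quality (non-Fast) rates listed here; the `VeoResolution` labels are illustrative rather than fal's parameter values, and the 4-to-8-second check mirrors the duration range above.

```typescript
// Illustrative resolution labels (not fal's actual parameter values).
type VeoResolution = "720p" | "1080p" | "4k";

// Full-quality Veo 3.1 per-second rates on fal, as listed in this section.
function veoRatePerSecond(resolution: VeoResolution, audio: boolean): number {
  const is4k = resolution === "4k";
  if (audio) return is4k ? 0.6 : 0.4;
  return is4k ? 0.4 : 0.2;
}

// Clip cost in USD, rounded to cents; Veo 3.1 clips run 4 to 8 seconds.
function veoClipCost(resolution: VeoResolution, audio: boolean, seconds: number): number {
  if (seconds < 4 || seconds > 8) {
    throw new RangeError("Veo 3.1 clips run 4 to 8 seconds");
  }
  return Math.round(veoRatePerSecond(resolution, audio) * seconds * 100) / 100;
}
```

`veoClipCost("1080p", true, 8)` returns 3.2 and `veoClipCost("4k", true, 8)` returns 4.8, which is why 4K with audio is the premium tier.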

How to Run Veo 3.1 on fal

You can run Veo 3.1 through fal's API or test it in the fal playground.

Multiple endpoints are available: text-to-video, image-to-video, reference-to-video, and first-last-frame-to-video, each with both standard and Fast variants. Same integration pattern as every other model on fal. If you've already integrated Kling or Sora, switching to Veo 3.1 is a one-line endpoint change.

Pricing

Here's how much it costs to run Veo 3.1 on fal:

- Veo 3.1 (720p-1080p, no audio): $0.20/second.

- Veo 3.1 (720p-1080p, with audio): $0.40/second.

- Veo 3.1 (4K, no audio): $0.40/second.

- Veo 3.1 (4K, with audio): $0.60/second.

Pros & Cons

Pros:

- Highest resolution ceiling at 4K, the only model on this list that goes beyond 1080p.

- Reference-to-video and first-last-frame control give you more precise scene direction than any other model listed here.

- Native audio with improved lip-sync over Veo 3.

- Fast variant cuts cost roughly in half while maintaining strong quality.

Cons:

- Full-quality 4K with audio is the most expensive per-second option at $0.60/second.

- Google's content safety filters are more conservative than other models on this list, which may require prompt adjustments for certain use cases.

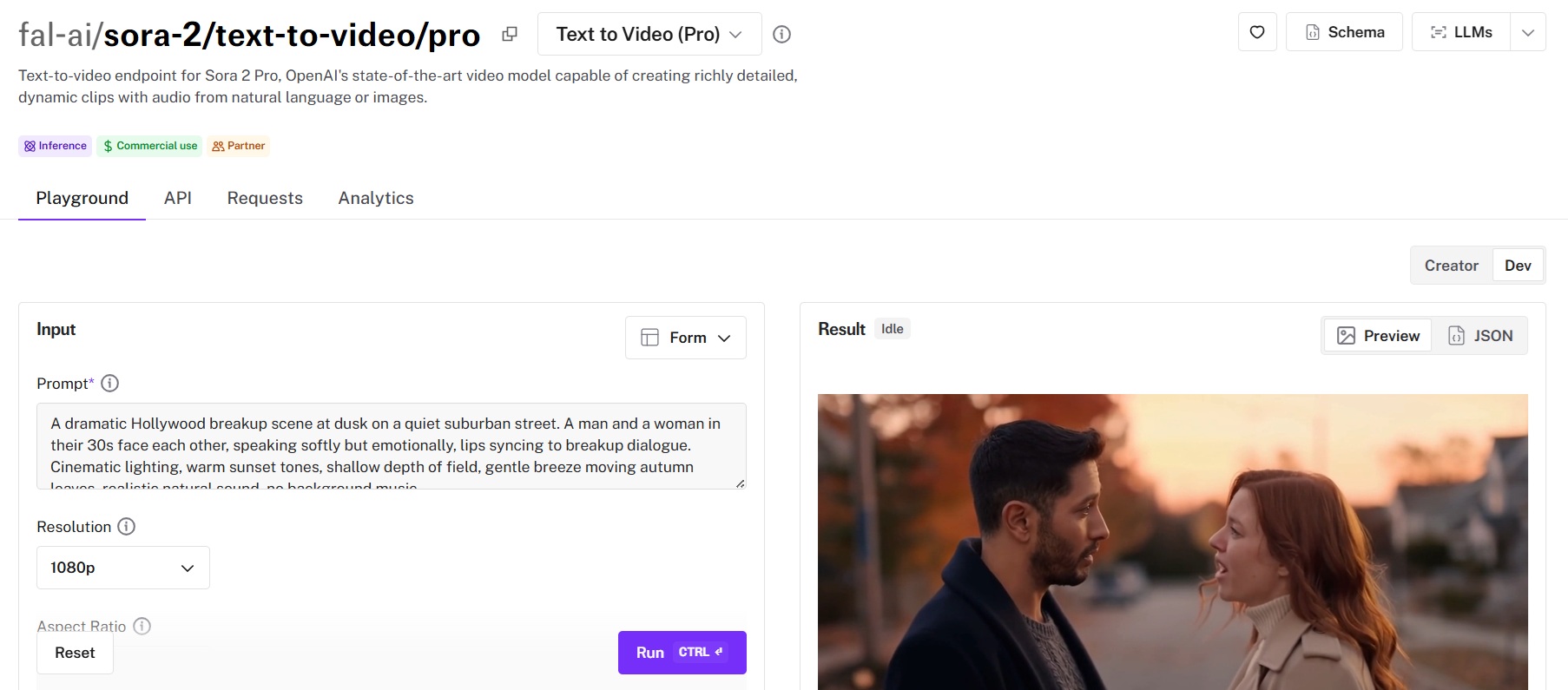

Sora 2 Pro

Best for: Filmmakers and content creators who need the longest AI-generated clips (up to 25 seconds) with synchronized dialogue and environmental audio.

Similar to: Veo 3.1, Veo 3.

Sora 2 Pro is OpenAI's premium video generation model, and its biggest advantage is something no other model on this list can match: duration. While most competitors cap out at 5-10 seconds per clip, Sora 2 Pro pushes to 25. That's a full scene, not a snippet.

It also generates synchronized audio from the same text prompt, including lip-synced dialogue, ambient sounds, footsteps, and wind.

Performance

Generated using Sora 2 Pro on fal, an AI video model from OpenAI.

- Motion quality and consistency: Strong across shorter clips (4-8 seconds), with visual coherence that holds well for narrative content.

- Output resolution and length: 720p and 1080p, with clips up to 25 seconds. Standard duration options are 4, 8, and 12 seconds, with extended mode pushing past that to reach 25 seconds. Aspect ratios cover 9:16 and 16:9 for social and cinematic formats.

- Generation speed and cost: $0.30/second at 720p. $0.50/second at 1080p. A 10-second 1080p clip costs $5.00. A full 25-second clip at 1080p runs $12.50.

- Camera and scene control: Prompt-based camera control works well for establishing shots and simple tracking movements. The image-to-video endpoint accepts a reference image for scene grounding. A video-to-video remix endpoint lets you restyle existing footage while preserving motion structure.

- Audio and lip-sync support: Native audio generation with dialogue lip-syncing, environmental sounds, and ambient audio.

How to Run Sora 2 Pro on fal

Available through fal's API and in the fal playground.

Three endpoints: text-to-video, image-to-video, and video-to-video remix. If you have an OpenAI API key, you can pass it directly and get charged by OpenAI. Otherwise, you'll be charged fal credits at the listed per-second rates.

Pricing

Here's how much it costs to run Sora 2 Pro on fal:

- Sora 2 Pro (720p): $0.30/second.

- Sora 2 Pro (1080p): $0.50/second.

Pros & Cons

Pros:

- Up to 25-second clips, the longest on this list; the next-longest, Vidu Q3, tops out at 16 seconds.

- Native audio with dialogue lip-syncing and environmental sounds from a single text prompt.

- Video-to-video remix lets you restyle existing footage while preserving motion structure.

Cons:

- Among the priciest options here: a 10-second 1080p clip costs $5.00, and a 25-second clip costs $12.50.

- Longer clips (20-25 seconds) trade some temporal coherence for duration, particularly in complex multi-character scenes.

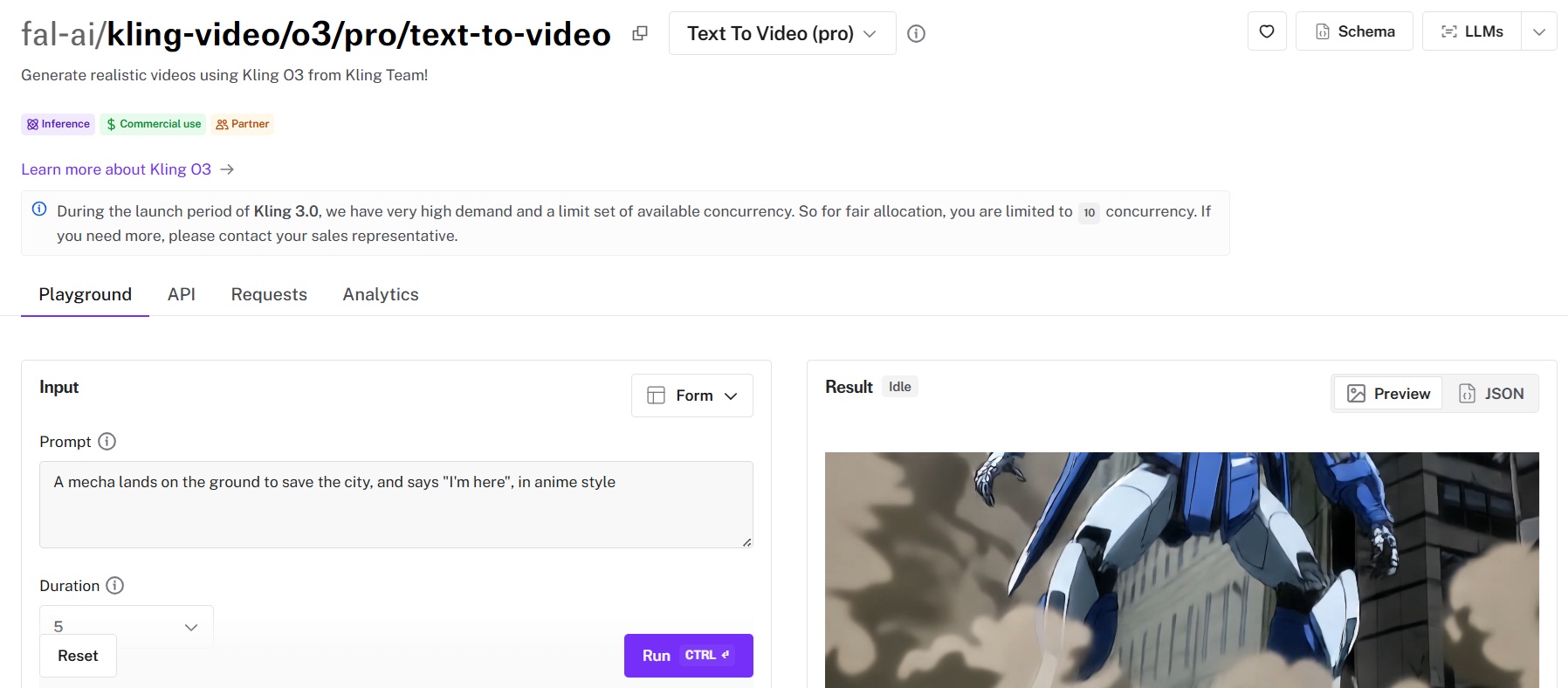

Kling 3 Pro (o3)

Best for: Production teams that want cinematic motion quality with strong prompt precision at a mid-tier price point.

Similar to: Kling 2.1 Pro, Hailuo 2.3 Pro.

Kling 3 Pro is Kuaishou's high-end video generation model that adds audio and the ability to add voice IDs. What Kling has consistently done better than most competitors is motion fluidity. Characters move naturally. Camera pans feel cinematic. Action sequences maintain their physics longer than you'd expect at this price tier.

Performance

Generated using Kling 3 Pro on fal, an AI video model from Kuaishou.

- Motion quality and consistency: Excellent fluidity with natural physics and stable character consistency across the full clip duration. Kling's strength has always been motion, and this iteration maintains that edge.

- Output resolution and length: Up to 1080p, with clip lengths from 3 to 15 seconds. Resolution quality holds well at 1080p; Veo 3.1 is the only model on this list that pushes into 4K territory.

- Generation speed and cost: For every second of video you generate, you will be charged $0.224 (audio off) or $0.28 (audio on). For example, a 10-second video with audio on costs $2.80.

- Camera and scene control: Supports start-frame and tail-frame image inputs for scene grounding.

- Audio and lip-sync support: Native audio generation, with support for voice IDs.

How to Run Kling 3 Pro on fal

Available through fal's API and in the fal playground. Text-to-video and image-to-video endpoints are both live.

Supports tail image input for end-frame control, negative prompts for avoiding unwanted elements, and cfg_scale tuning for how closely the output follows the prompt. Same integration pattern as every model on fal.
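The options listed above can be sketched as a typed input builder. The field names (`image_url`, `tail_image_url`, `negative_prompt`, `cfg_scale`), the 0-1 `cfg_scale` range, and the `buildKlingInput` helper are assumptions modeled on the options named here, so confirm them against the endpoint's schema on fal before use.

```typescript
// Hypothetical input shape for a Kling image-to-video request.
// Field names are assumptions; check the endpoint's schema on fal.
interface KlingImageToVideoInput {
  prompt: string;
  image_url: string; // start frame
  tail_image_url?: string; // optional end frame
  negative_prompt?: string; // elements to avoid
  cfg_scale?: number; // guidance value, assumed to range from 0 to 1
}

function buildKlingInput(
  prompt: string,
  startImageUrl: string,
  opts: { endImageUrl?: string; negativePrompt?: string; cfgScale?: number } = {}
): KlingImageToVideoInput {
  if (opts.cfgScale !== undefined && (opts.cfgScale < 0 || opts.cfgScale > 1)) {
    throw new RangeError("cfg_scale must be between 0 and 1");
  }
  const input: KlingImageToVideoInput = { prompt, image_url: startImageUrl };
  // Only include optional fields that were actually provided.
  if (opts.endImageUrl) input.tail_image_url = opts.endImageUrl;
  if (opts.negativePrompt) input.negative_prompt = opts.negativePrompt;
  if (opts.cfgScale !== undefined) input.cfg_scale = opts.cfgScale;
  return input;
}
```

The returned object is what you would pass as `input` to `fal.subscribe` for the Kling image-to-video endpoint you are targeting; validating locally catches bad values before a paid request is queued.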

Pricing

Here's how much it costs to run Kling 3 Pro (o3) on fal:

- Per second (audio off): $0.224.

- Per second (audio on): $0.28.

- 10-second video (audio on): $2.80.

Pros & Cons

Pros:

- Good motion fluidity.

- Audio generation, so you don't need a separate model for sound.

- Start-frame and tail-frame inputs for precise scene control.

Cons:

- Prioritizes motion and audio capabilities over on-screen text rendering.

- Maxes out at 1080p, which covers most production needs. For 4K, Veo 3.1 is the only option on this list that goes higher.


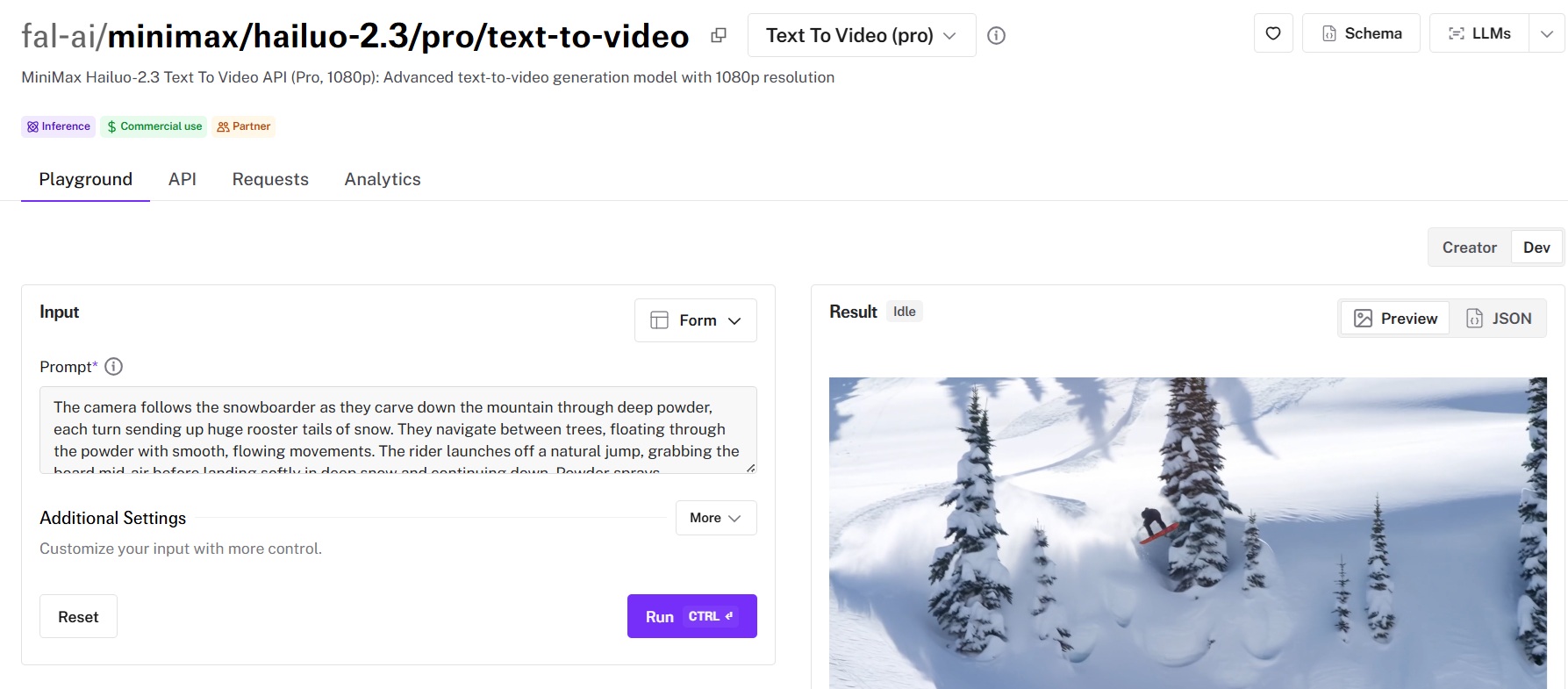

Hailuo 2.3 Pro

Best for: Teams that need reliable, fast image-to-video generation with both budget and premium quality tiers at competitive pricing.

Similar to: Kling 2.5 Turbo Pro, Wan 2.2.

Hailuo 2.3 comes in four variants: Standard (768p), Pro (1080p), and a Fast version of each. It's one of the most straightforward models to work with: upload an image, write a motion description, and get a clean, fluid animation back.

Performance

Generated using Hailuo 2.3 Pro on fal, an AI video model from MiniMax.

- Motion quality and consistency: Natural motion synthesis that preserves the source image's details and colors throughout the clip.

- Output resolution and length: Standard tier runs at 768p, Pro at 1080p. Both support text-to-video and image-to-video. Clip duration typically runs around 6 seconds.

- Generation speed and cost: Hailuo 2.3 Pro costs $0.49 per video generation on fal.

- Camera and scene control: Supports start and end image inputs for controlled animation between two frames. Motion description prompts are followed reliably.

- Audio and lip-sync support: Hailuo 2.3 focuses on visual generation. You can pair it with an external audio model for sound.

How to Run Hailuo 2.3 on fal

You can run Hailuo 2.3 through fal's API or test it in the fal playground.

Four variants are available: Standard and Pro, each with a regular and Fast option. Text-to-video and image-to-video endpoints are both live. Same integration pattern as everything else on fal.

Pricing

Here's how much it costs to run Hailuo 2.3 Pro on fal: $0.49 per video generation.

Pros & Cons

Pros:

- Reliable, consistent output across generations, great for volume production.

- Text on screen is solid.

- End image support for controlled animation between two frames.

Cons:

- Focused on visual generation, so you'll pair it with MMAudio or similar for sound.

- Prioritizes consistency and reliability over the cinematic motion quality that Kling 2.5 Turbo Pro delivers for complex movement.

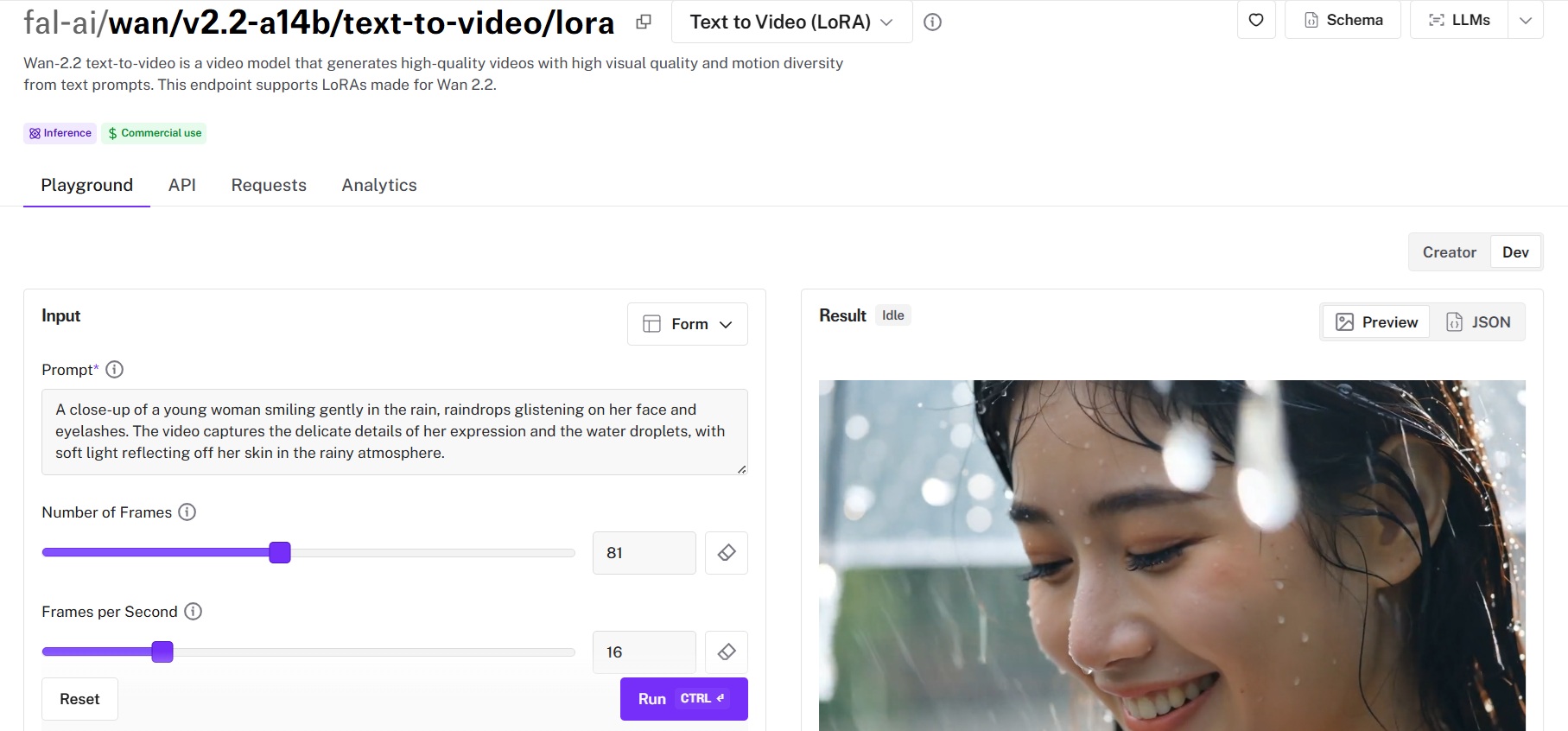

Wan 2.2 A14B

Best for: Budget-conscious teams and open-source advocates who want solid video quality at the lowest per-second cost on fal.

Similar to: Hailuo 2.3 Standard, Seedance 1.0 Pro Fast.

Wan 2.2 is an open-source video model, and its A14B variant uses a mixture-of-experts design with 14 billion active parameters. It punches well above its weight at this price, and because it's open-source, teams with their own GPU infrastructure can run it locally for even less.

Complex body movement, athletic actions, and detailed facial expressions are all noticeably better than the 2.1 version.
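Whether self-hosting pays off is a break-even calculation: a dedicated GPU is a roughly fixed monthly cost, while API spend grows with every second generated. A minimal sketch, in which every input (your monthly GPU cost, any per-second running cost) is a hypothetical value you supply, not a measured figure:

```typescript
// Seconds of generated video per month at which self-hosting becomes cheaper
// than a per-second API rate. All inputs are user-supplied assumptions.
function breakEvenSeconds(
  apiUsdPerSecond: number,
  selfHostFixedUsdPerMonth: number,
  selfHostMarginalUsdPerSecond = 0
): number {
  const savingPerSecond = apiUsdPerSecond - selfHostMarginalUsdPerSecond;
  // If self-hosting costs as much per second as the API, it never breaks even.
  if (savingPerSecond <= 0) return Infinity;
  return selfHostFixedUsdPerMonth / savingPerSecond;
}
```

With a hypothetical $1,000/month GPU and no per-second running cost, `breakEvenSeconds(0.1, 1000)` comes out at about 10,000 seconds (roughly 2.8 hours) of generated video per month at the $0.10/second API rate; below that volume, the API is cheaper. The sketch ignores engineering and maintenance time, which pushes the real break-even higher.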

Performance

Generated using Wan 2.2 A14B on fal.

- Motion quality and consistency: Strong for the price tier. The upgrade from Wan 2.1 shows clearly in complex movements. Characters handle athletic actions and facial expressions more convincingly than before.

- Output resolution and length: 480p, 580p, and 720p options. No 1080p option, which is the main trade-off for the lower price point.

- Generation speed and cost: The model costs $0.10 per second to run.

- Camera and scene control: Supports start and end image inputs, and responds to cinema-grade aesthetic keywords that control lighting, color, and cinematic style directly in the prompt.

- Audio and lip-sync support: Visual-only output, so audio needs to be added separately.

How to Run Wan 2.2 A14B on fal

Available through fal's API and in the fal playground. Text-to-video and image-to-video endpoints are both live.

It uses the same integration pattern as everything else on fal.

Pricing

Here's how much it costs to run Wan 2.2 A14B on fal: $0.10 per second.

Pros & Cons

Pros:

- One of the most affordable options on this list at $0.10 per second for 720p.

- Open-source, so you can self-host for even lower costs at scale.

- Cinema-grade aesthetic controls for lighting and color grading baked into the prompt system.

Cons:

- Maxes out at 720p, positioning it as a draft and preview tool rather than a final-render solution.

- Best suited for single-character scenes, as multi-character compositions are where Kling and Veo pull ahead.

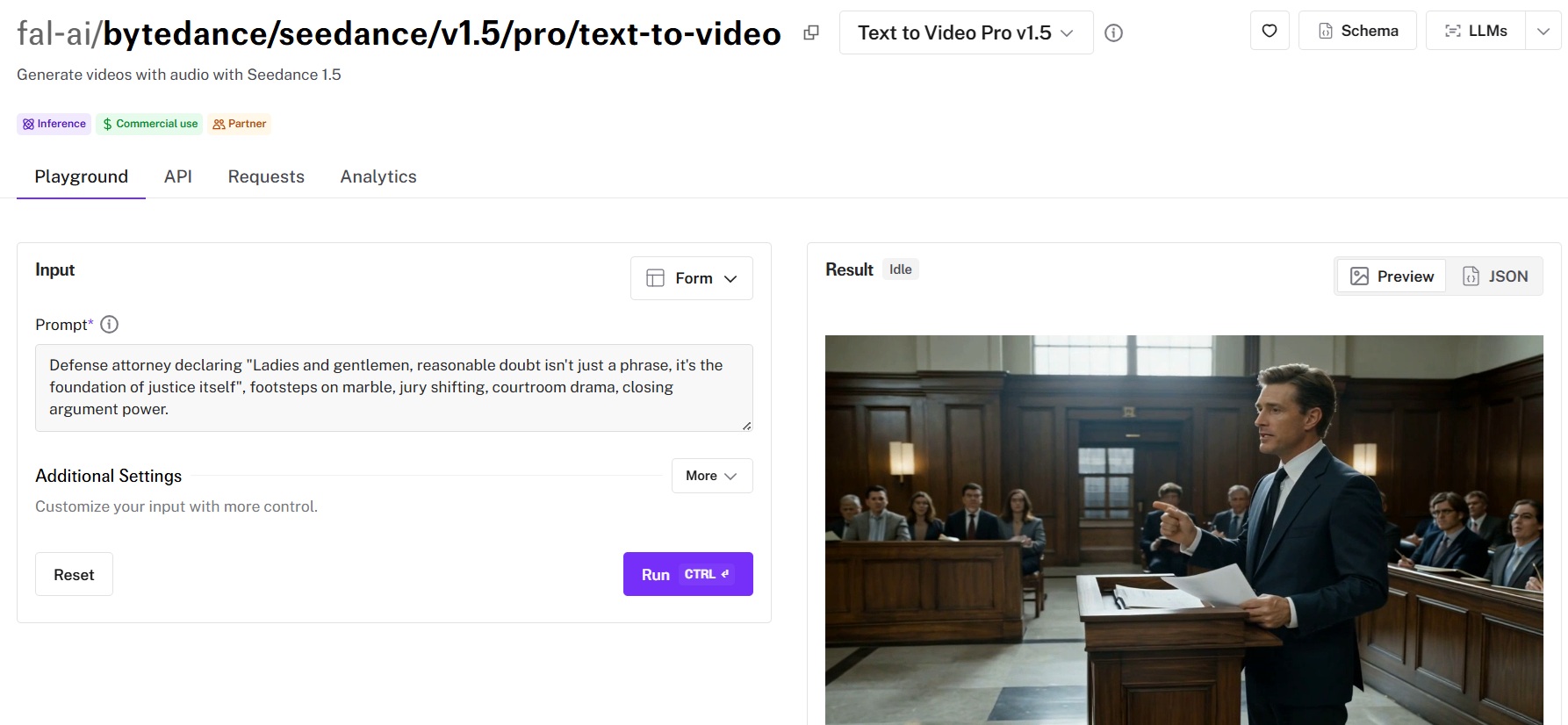

Seedance 1.5 Pro

Best for: Teams that need audio-enabled video generation with start and end frame control from ByteDance's video generation ecosystem.

Similar to: Kling 2.5 Turbo Pro, Veo 3.1.

Seedance 1.5 Pro adds the feature most teams have been waiting for: native audio support. That puts it in a small club alongside Veo 3.1 and Sora 2 Pro that can generate synchronized sound directly from the prompt.

It also supports start and end frame inputs. For storyboard-to-video workflows where you already have keyframes locked in, this is useful.

Performance

Generated using Seedance 1.5 Pro on fal, an AI video model from ByteDance.

- Motion quality and consistency: Solid motion generation with good temporal consistency.

- Output resolution and length: Standard clip lengths with both text-to-video and image-to-video endpoints. The 1.5 release builds on the 1.0 Pro foundation with improved quality.

- Generation speed and cost: Each 720p, 5-second video with audio costs roughly $0.26. For other resolutions, 1 million video tokens costs $2.40 with audio and $1.20 without.

- Camera and scene control: Start and end frame inputs for keyframe-guided generation. Text-based camera and motion control through natural language prompts work well for standard use cases.

- Audio and lip-sync support: Native audio generation is the headline feature of the 1.5 Pro release. Synchronized sound from the same prompt. This puts it in Veo 3.1 and Sora 2 Pro territory for audio-visual content.

How to Run Seedance 1.5 on fal

Available through fal's API and in the fal playground.

Text-to-video and image-to-video endpoints (with start and end frame support) are both live. Same integration pattern as everything else on fal. One-line endpoint change if you're already running Kling or Hailuo.

Pricing

Running Seedance 1.5 Pro on fal costs roughly $0.26 for a 720p, 5-second video with audio.

For other resolutions, 1 million video tokens costs $2.40 with audio and $1.20 without.

Here's how tokens (video) are calculated: (height x width x FPS x duration) / 1024.
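The token formula above translates directly into code. A sketch using the listed rates; the function names are hypothetical, and the example's 24 fps frame rate is an assumption since the frame rate isn't stated here, though it does reproduce the roughly $0.26 figure for a 720p, 5-second clip with audio.

```typescript
// Seedance 1.5 Pro token count on fal: (height x width x FPS x duration) / 1024.
function seedanceTokens(height: number, width: number, fps: number, seconds: number): number {
  return (height * width * fps * seconds) / 1024;
}

// Listed rates: $2.40 per million tokens with audio, $1.20 without.
function seedanceCostUsd(tokens: number, withAudio: boolean): number {
  const usdPerMillion = withAudio ? 2.4 : 1.2;
  return (tokens / 1e6) * usdPerMillion;
}
```

`seedanceTokens(720, 1280, 24, 5)` gives 108,000 tokens, and `seedanceCostUsd(108000, true)` gives about $0.259 (about $0.13 without audio).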

Pros & Cons

Pros:

- Native audio generation from the same prompt as the video.

- Start and end frame inputs for precise keyframe-guided animation.

- Strong human motion generation, particularly dance and athletic sequences.

Cons:

- Newer model with less community testing than Kling or Veo, so documentation and edge-case handling are still developing.

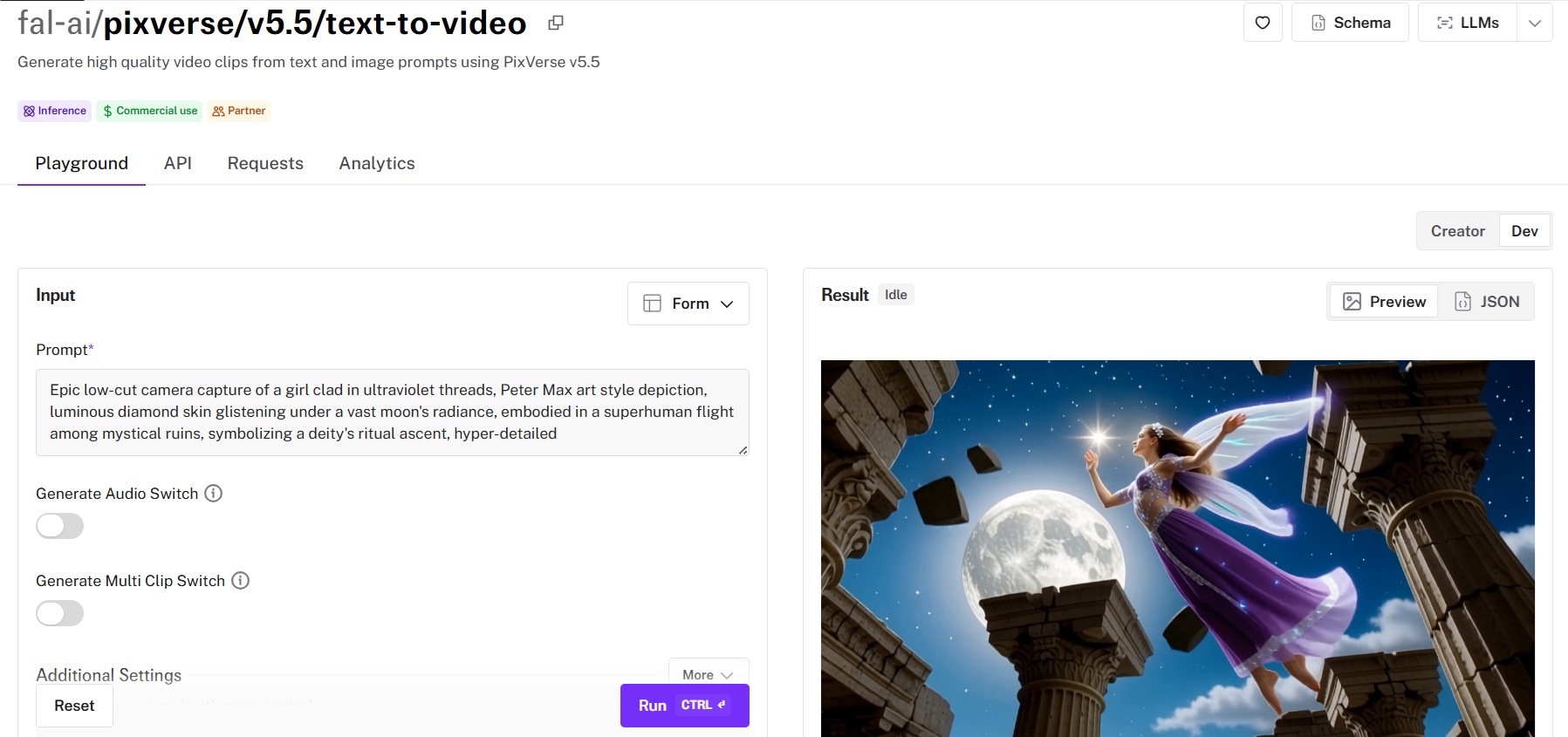

PixVerse v5.5

Best for: Creators focused on stylized content, visual effects, and creative transitions rather than strict photorealism.

Similar to: Hailuo 2.3, Seedance 1.0.

PixVerse v5.5 takes a different approach from most models on this list. Where Veo and Sora chase photorealism and cinematic fidelity, PixVerse leans into stylized output, visual effects, and creative transitions.

Performance

Generated using PixVerse v5.5 on fal.

- Motion quality and consistency: Excels with stylized content, while Kling and Veo remain the stronger choices for photorealistic motion.

- Output resolution and length: 360p, 540p, 720p, and 1080p options with 5-second clips as the standard.

- Generation speed and cost: For a 5-second video, it will cost $0.15 at 360p or 540p, $0.20 at 720p, and $0.40 at 1080p.

- Camera and scene control: The Effects and Transition endpoints are the standout features here. Effects applies stylized visual treatments during generation. Transitions animates smoothly between two provided images.

- Audio and lip-sync support: Visual-only output, so audio needs to be added separately.

How to Run PixVerse v5.5 on fal

Available through fal's API and in the fal playground.

Four endpoint types: text-to-video, image-to-video, effects, and transitions. Same integration pattern as every other model on fal.

Pricing

Here's how much it costs to run PixVerse v5.5 on fal:

- 5-second video at 360p or 540p: $0.15.

- 5-second video at 720p: $0.20.

- 5-second video at 1080p: $0.40.
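Flat per-video pricing turns budgeting into a division problem. A minimal sketch using the prices above, kept in integer cents so floating-point rounding can't shave a clip off the count; the `clipsWithinBudget` name is hypothetical.

```typescript
type PixVerseResolution = "360p" | "540p" | "720p" | "1080p";

// PixVerse v5.5 flat prices per 5-second video on fal, in integer cents.
const PIXVERSE_CENTS_PER_VIDEO: Record<PixVerseResolution, number> = {
  "360p": 15,
  "540p": 15,
  "720p": 20,
  "1080p": 40,
};

// How many 5-second clips a USD budget covers at a given resolution.
function clipsWithinBudget(budgetUsd: number, resolution: PixVerseResolution): number {
  // Convert to whole cents first (e.g. 0.3 * 100 is 30.000000000000004).
  const budgetCents = Math.round(budgetUsd * 100);
  return Math.floor(budgetCents / PIXVERSE_CENTS_PER_VIDEO[resolution]);
}
```

A $10 budget covers 25 clips at 1080p, 50 at 720p, or 66 at 360p or 540p.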

Pros & Cons

Pros:

- Dedicated Effects and Transition endpoints that no other model on this list offers.

- Flat per-video pricing makes budgeting predictable.

- Strong stylized output for social media and short-form creative content.

Cons:

- Optimized for stylized aesthetics rather than photorealism, so teams needing cinematic fidelity will get better results from Kling or Veo.

- Focused on visual effects and stylization rather than audio-visual output.

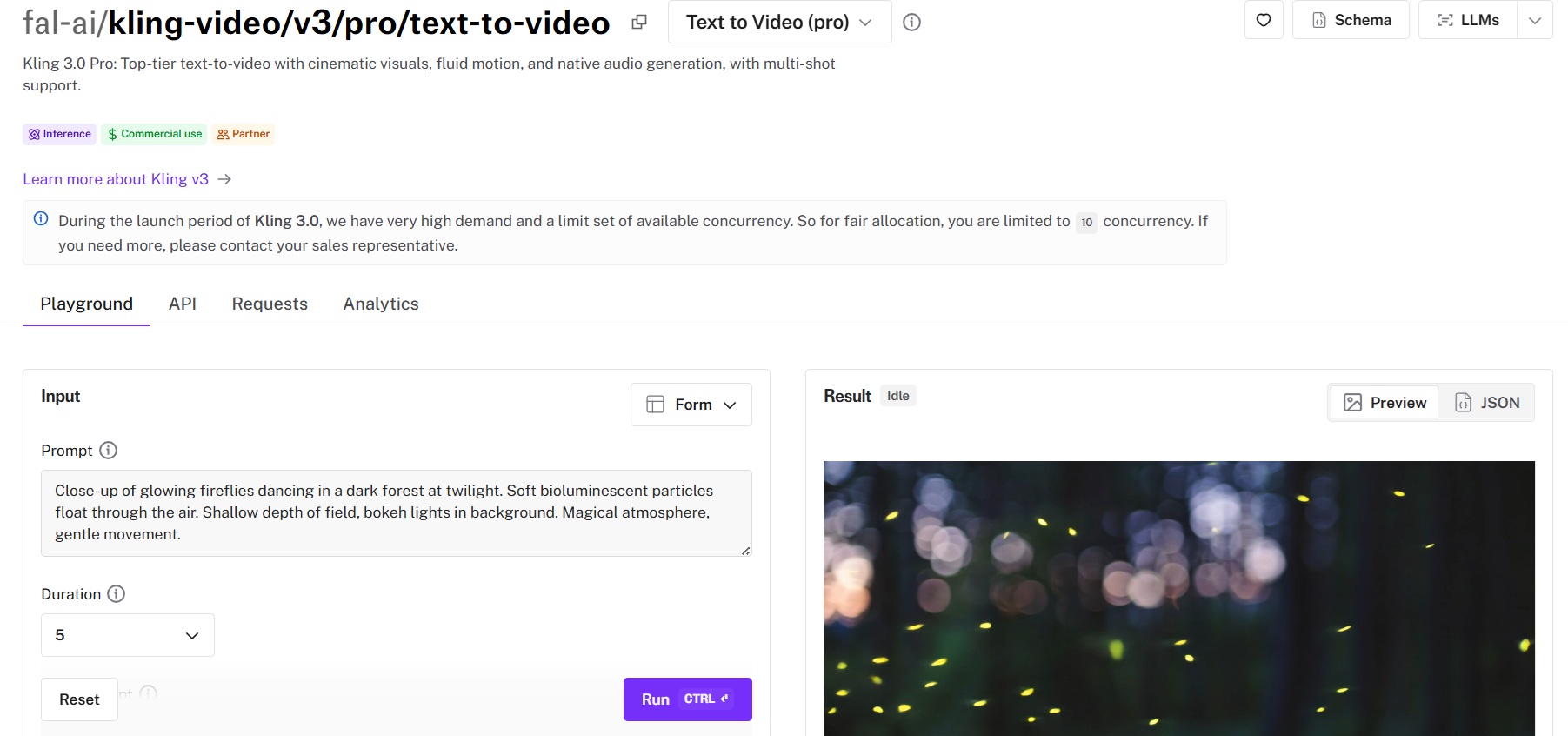

Kling 3.0 Pro (v3)

Best for: Teams that want the latest Kling model with native audio generation, multi-shot support, and custom element control.

Similar to: Kling 2.5 Turbo Pro, Veo 3.1.

Kling 3.0 Pro brings two things the 2.5 Turbo line was missing: native audio and multi-shot support.

Native audio means you no longer need to pair Kling with MMAudio or another audio model for sound. Multi-shot support means you can generate multiple connected scenes from a single prompt, maintaining visual consistency across shots.

That's a feature filmmakers and narrative content creators have been asking for since AI video generators started producing single-shot clips.

Performance

Generated using Kling 3.0 Pro (v3) on fal, an AI video model from Kuaishou.

- Motion quality and consistency: Inherits Kling's signature motion fluidity. Characters move naturally, camera work feels cinematic, and the multi-shot feature maintains visual consistency across scene cuts.

- Output resolution and length: Up to 1080p, with clips between 3 and 15 seconds. Multi-shot generation means you can produce longer narrative sequences across multiple connected clips.

- Generation speed and cost: For every second of video you generate, you will be charged $0.224 (audio off) or $0.336 (audio on). With voice control enabled during audio generation, the rate rises to $0.392 per second.

- Camera and scene control: Multi-shot support is the standout. Custom element input lets you lock in specific characters and objects for consistency. Start and end frame inputs are also available.

- Audio and lip-sync support: Native audio generation, which brings Kling into direct competition with Veo 3.1 and Sora 2 Pro on audio-visual content.

How to Run Kling 3.0 Pro on fal

Available through fal's API and in the fal playground. Text-to-video, image-to-video, and reference-to-video endpoints are available.

Same integration pattern as every other Kling model on fal. If you're already running Kling 2.5 Turbo, switching is a one-line endpoint change.

Pricing

Here's how much it costs to run Kling 3.0 Pro (v3) on fal:

- For every second of video you generate, you will be charged $0.224 with audio off or $0.336 with audio on.

- With voice control enabled during audio generation, the rate is $0.392 per second.

- For example, a 5-second video with audio on and voice control will cost $1.96.

Pros & Cons

Pros:

- Native audio.

- Multi-shot support for connected scenes with visual consistency across cuts.

- Custom element input for character and object consistency.

Cons:

- Audio generation said the word "pure" twice in my test, suggesting audio precision is still maturing.

- New release, so community testing and documentation are still developing.

Vidu Q3

Best for: Teams exploring newer video generation models with both Pro (quality-first) and Turbo (speed-first) tiers for flexible workflow trade-offs.

Similar to: Hailuo 2.3, Seedance 1.5.

Vidu Q3 is the latest release in the Vidu family, and it takes a two-tier approach that's worth paying attention to. The Pro tier prioritizes output quality. The Turbo tier prioritizes generation speed.

This split is useful because most models on this list force you to choose one model that tries to balance both. With Vidu Q3, you can use Turbo for rapid iteration and drafting, then switch to Pro for final renders. Same endpoint family, same integration, different quality-speed tradeoff.

Performance

Generated using Vidu Q3 on fal.

- Motion quality and consistency: The Q3 Pro tier delivers solid motion quality that competes with Hailuo 2.3 Pro in the mid-tier bracket.

- Output resolution and length: 360p, 540p, 720p, and 1080p options, with clip durations from 1 to 16 seconds. Both text-to-video and image-to-video inputs are supported across Pro and Turbo tiers.

- Generation speed and cost: $0.035 per video second for 360p and 540p. The cost will be 2.2x for 720p and 1080p resolution.

- Camera and scene control: Standard prompt-based camera and motion control. The Q2 generation supported video extension (for extending existing clips), and Q3 is expected to carry that forward.

- Audio and lip-sync support: Native audio generation.

How to Run Vidu Q3 on fal

Available through fal's API and in the fal playground.

Four endpoints: text-to-video and image-to-video, each with Pro and Turbo variants. Same integration pattern as the rest of fal's catalog.

Pricing

Here's how much it'd cost to run Vidu Q3 on fal:

- $0.035 per video second for 360p and 540p.

- The cost will be 2.2x for 720p and 1080p resolution.
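The 2.2x multiplier is easiest to see as a rate function. A sketch under the assumption that Pro and Turbo share these rates, which this section doesn't state; the function names are hypothetical, and the clip-length check uses the 1-to-16-second range listed above.

```typescript
type ViduResolution = "360p" | "540p" | "720p" | "1080p";

// Vidu Q3 base rate on fal at 360p and 540p.
const VIDU_BASE_USD_PER_SECOND = 0.035;

// 720p and 1080p cost 2.2x the base rate.
function viduUsdPerSecond(resolution: ViduResolution): number {
  const multiplier = resolution === "720p" || resolution === "1080p" ? 2.2 : 1;
  return VIDU_BASE_USD_PER_SECOND * multiplier;
}

// Clip cost in USD; Vidu Q3 clips run 1 to 16 seconds.
function viduClipCost(resolution: ViduResolution, seconds: number): number {
  if (seconds < 1 || seconds > 16) {
    throw new RangeError("Vidu Q3 clips run 1 to 16 seconds");
  }
  return viduUsdPerSecond(resolution) * seconds;
}
```

A 10-second clip then costs about $0.35 at 540p and about $0.77 at 720p ($0.077/second).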

Pros & Cons

Pros:

- Dual Pro and Turbo tiers let you explicitly trade quality for speed based on your workflow stage.

- Both text-to-video and image-to-video on all tiers.

- Good value for money, with satisfactory audio and on-screen text.

Cons:

- Tuned for speed and value rather than the cinematic polish of Kling or Veo.

- Newer model, so community testing and real-world documentation are still building compared to established options like Kling and Veo.


Generate Videos at Scale Through a Single API With fal

The AI video generation space has more capable models now than at any point in the past two years. And that's the real problem developers and marketers face: picking the right one requires testing, which costs time and credits.

If you want access to the best-performing models, such as Veo 3.1, Sora 2 Pro, Kling 3 Pro, Hailuo 2.3, and Wan 2.2, all through a single API with pay-per-use pricing and no GPU headaches, fal is the fastest way to get there. You can test any model in the playground or plug into the API in minutes.
