Uni-1

Less Artificial. More Intelligent.

Luma's multimodal reasoning model that generates pixels. Built on Unified Intelligence, Uni-1 understands intention, responds to direction, and thinks with you.

What Makes Uni-1 Different

Common-Sense Scene Completion and Spatial Reasoning

Uni-1 reasons about what it sees before generating. It fills in scenes with plausible detail, understands spatial relationships between objects, and applies transformations that respect the logic of the image. Not just pattern matching, but visual reasoning.

Reference-Guided Generation With Source-Grounded Controls

Provide one or more reference images and Uni-1 follows your direction. Multi-reference input supports up to 8 images, combining characters, objects, and scenes coherently. Source-grounded controls let you steer generation without losing fidelity to your references.

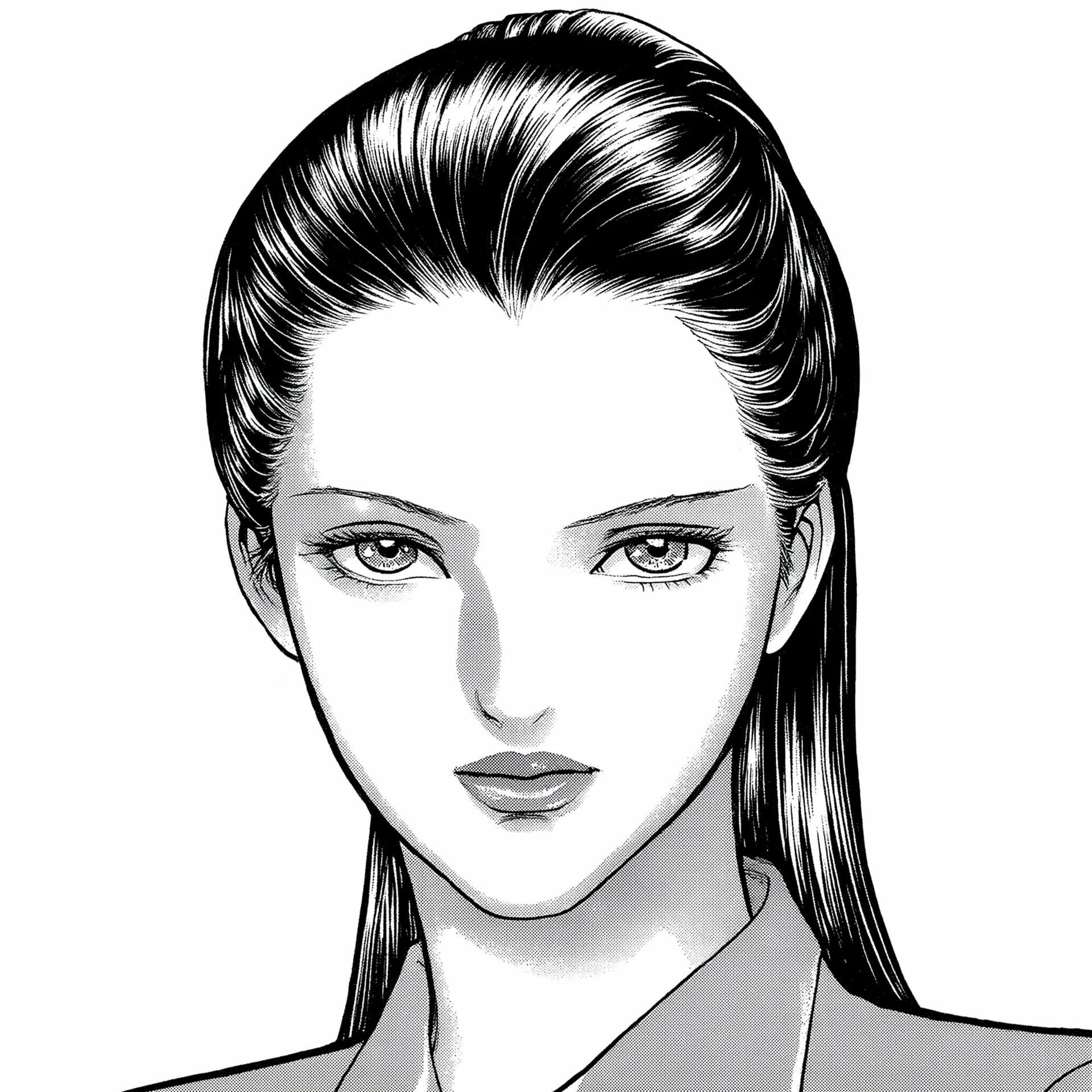

Culture-Aware Visual Generation Across Aesthetics

Uni-1 understands diverse visual traditions. It generates across styles from photorealism to manga, webtoon, and meme aesthetics. Because character consistency holds across panels and frames, Uni-1 is well suited to sequential art, brand assets, and culturally specific creative work.

Uni-1 Examples

From photorealistic scenes to stylized illustrations, every example below was generated from a single text prompt.

"A cozy bookshop interior with warm amber lighting, floor-to-ceiling wooden shelves packed with vintage books, a cat napping on a reading chair"

"Underwater scene with bioluminescent jellyfish drifting through a coral reef, deep ocean blue with scattered light rays from the surface"

"A street food market in Tokyo at dusk, paper lanterns glowing above steaming stalls, crowds browsing between narrow alleys"

"Portrait of an elderly craftsman in a leather workshop, hands stained with dye, soft window light illuminating tools and half-finished bags"

"A tabby cat wearing tiny round glasses sitting behind a miniature therapy desk, notepad and pen in front, warm office lighting"

"Anime-style portrait of a young woman with dark hair and soft features, webtoon aesthetic, clean linework, pastel color palette"

Industries Building with Uni-1

From concept art to product photography, teams across industries are building with Uni-1 on fal.

Concept Art and Visual Exploration

Generate concept art, mood boards, and visual explorations from text descriptions. Multi-reference input lets designers combine styles and subjects and iterate faster than traditional workflows allow.

Product Photography and Lifestyle Shots

Create product imagery in different settings and styles without a physical photoshoot. Place products in realistic scenes, change backgrounds, and generate lifestyle shots at scale.

Illustration and Sequential Art

Generate illustrations with consistent characters across pages and panels. Webtoon, manga, and comic-style generation supports character references to maintain identity across frames.

Campaign Visuals and Brand Assets

Produce campaign imagery, social media visuals, and brand assets that match specific aesthetic guidelines. Culture-aware generation helps visuals resonate with target audiences across markets.

Space Visualization and Staging

Transform architectural plans and empty spaces into fully staged environments. Image editing capabilities let you swap materials, furniture, and lighting to explore design options in context.

Character Design and World Building

Design characters, environments, and props with spatial reasoning that produces coherent scenes. Multi-reference generation helps maintain visual consistency across assets for games, film, and animation.

Common Questions About Uni-1

What is Uni-1?

Uni-1 is Luma's multimodal reasoning model built on Unified Intelligence. It understands intention, responds to direction, and thinks before generating pixels. It handles text-to-image, image editing, and multi-reference generation at up to 2048px resolution.

What can I do with Uni-1?

Uni-1 supports text-to-image generation, image-to-image editing, and multi-reference generation with up to 8 input images. It excels at common-sense scene completion, spatial reasoning, culture-aware visual creation, and character consistency across styles like webtoon and manga.

What is multi-reference generation?

Multi-reference generation lets you provide multiple input images (up to 8) as references. Uni-1 combines them coherently into a single output, understanding spatial relationships and visual context across all references. This is useful for combining characters, objects, and scenes from different sources.

What makes Uni-1 different from other image models?

Uni-1 is built on Unified Intelligence, which means it reasons about what it sees before generating. It handles common-sense scene completion, spatial reasoning, and plausibility-driven transformation. It's also culture-aware, supporting diverse aesthetics from photorealism to manga and webtoon styles.

What resolution does Uni-1 support?

Uni-1 generates images at up to 2048px resolution for text-to-image and image editing tasks.
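The two limits stated above (up to 8 reference images, output up to 2048px) are the kind of thing a client might check before sending a request. Below is a hypothetical pre-flight helper in Python. The `GenerationRequest` shape and the helper names are invented for illustration and are not fal.ai's schema. The helper also assumes that the 2048px limit applies to the longer side of the image. Because the API is not yet live, treat this as an illustration of the published limits, not as working integration code.

```python
from dataclasses import dataclass, field

# Limits quoted on this page; how the live API enforces them is an assumption.
MAX_REFERENCE_IMAGES = 8
MAX_SIDE_PX = 2048  # assumed to apply to the longer side


@dataclass
class GenerationRequest:
    """Hypothetical request shape for illustration only, not fal.ai's actual schema."""
    prompt: str
    width: int
    height: int
    reference_urls: list = field(default_factory=list)


def fit_within_max_side(width: int, height: int, max_side: int = MAX_SIDE_PX) -> tuple:
    """Scale (width, height) down, keeping the aspect ratio, so neither side exceeds max_side."""
    if width <= 0 or height <= 0:
        raise ValueError("width and height must be positive")
    longest = max(width, height)
    if longest <= max_side:
        return width, height  # already within the limit
    scale = max_side / longest
    return max(1, round(width * scale)), max(1, round(height * scale))


def validate(req: GenerationRequest) -> GenerationRequest:
    """Reject too many references; clamp the requested size to the assumed resolution cap."""
    if len(req.reference_urls) > MAX_REFERENCE_IMAGES:
        raise ValueError(
            f"at most {MAX_REFERENCE_IMAGES} reference images are supported, "
            f"got {len(req.reference_urls)}"
        )
    w, h = fit_within_max_side(req.width, req.height)
    return GenerationRequest(req.prompt, w, h, list(req.reference_urls))


if __name__ == "__main__":
    req = GenerationRequest("a cozy bookshop interior", 4096, 2304, ["ref1.png", "ref2.png"])
    checked = validate(req)
    print(checked.width, checked.height)
```

The helper scales an oversized size down on the client instead of letting the request fail, which preserves the requested aspect ratio. This page does not say whether the service itself rejects or resizes oversized requests.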

How do I get access?

Uni-1 is coming soon on fal.ai. Contact sales to get early access and be notified when the API is live.

Can I use Uni-1 for commercial projects?

Yes. Output generated through the fal.ai API can be used in commercial projects. Check fal.ai's terms of service for full details on usage rights and licensing.

Get in Touch About Uni-1

Want to learn more about integrating Uni-1 into your workflow? Leave your details and our team will reach out.